Top 10 Semantic Layer Tools in 2026: The Definitive Comparison

The semantic layer has evolved from architectural curiosity to enterprise necessity. As organizations push AI deeper into decision-making workflows, the gap between raw data and trusted insights has become unsustainable. In 2026, semantic layers are now considered foundational infrastructure for any enterprise managing distributed data environments—no longer a “nice to have” but a prerequisite for scaling analytics and AI across the organization.

This analysis examines the leading semantic layer platforms, evaluating how each translates complex data models into unified, business-friendly definitions that enable consistent analytics across tools, teams, and, critically, AI systems. The market has matured significantly, with platforms ranging from specialized standalone solutions that virtualize data across any source to integrated approaches embedded within data warehouses. Understanding the capabilities, tradeoffs, and optimal use cases for each platform is essential for data leaders designing analytics strategies that will sustain competitive advantage.

Understanding Semantic Layers: Translation Infrastructure for Modern Data

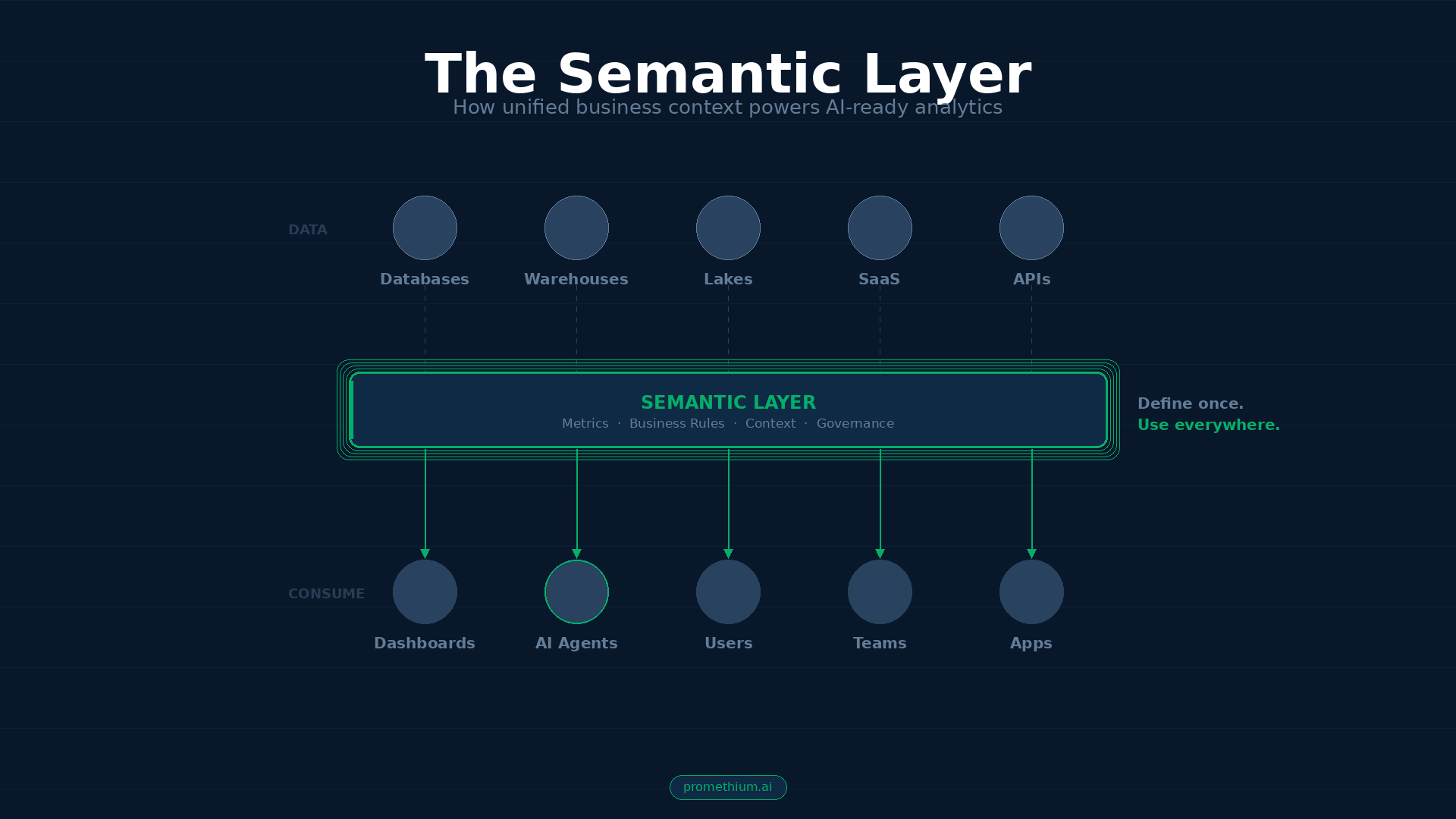

A semantic layer is fundamentally a translation layer sitting between raw data—scattered across databases, data lakes, warehouses, and SaaS applications—and the business users, analytics tools, and AI systems consuming that data. Rather than forcing users to navigate complex database schemas, understand join relationships, and memorize technical column names, a semantic layer provides an abstraction mapping technical structures to intuitive business concepts.

The core innovation is defining business logic, metrics, and relationships once, centrally, then reusing that definition everywhere—in dashboards, reports, APIs, embedded applications, and increasingly in AI agents and natural language interfaces. Organizations implementing semantic layers report achieving 4x faster time-to-insight as business stakeholders access consistent, pre-defined metrics without waiting for data engineers to construct custom queries.

The urgency around semantic layers intensified with large language models and AI-driven analytics. When organizations attempted natural language querying without semantic foundations, results proved disastrous. LLMs generating SQL against raw schemas produced syntactically correct queries that were semantically wrong, returning plausible-looking numbers that diverged significantly from ground truth. Internal dbt testing, discussed in the dbt Semantic Layer section, shows LLM accuracy improving from approximately 40% without a semantic layer to 83% when grounded in governed semantic definitions. This improvement explains why enterprise AI initiatives increasingly treat semantic layer infrastructure as a prerequisite rather than an optional component.

Evaluation Criteria for Semantic Layer Selection

To properly compare semantic layer tools, we must establish dimensions reflecting both technical capabilities and business outcomes organizations care about when selecting infrastructure.

Metrics Definition and Dimensionality represents the first critical criterion. A semantic layer’s ability to capture complex business metrics—not just simple aggregations but ratios, derived metrics, distinct counts with proper multi-level aggregation handling, and metrics depending on other metrics—determines how well it represents real business logic. Simple tools may handle basic “sum of revenue” metrics but struggle with sophisticated calculations like customer lifetime value requiring complex formulas, historical adjustments, and weighted combinations across time periods.
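To illustrate why ratio metrics are harder than simple sums, here is a minimal Python sketch. The rows, the column layout, and the `ratio_metric` helper are hypothetical and are not taken from any platform discussed in this article. The point is only that a ratio should be computed from separately aggregated numerator and denominator, not by averaging row-level ratios.

```python
from collections import defaultdict

# Hypothetical pre-summarized rows: (region, revenue, order_count)
rows = [
    ("east", 100.0, 1),
    ("east", 300.0, 3),
    ("west", 50.0, 1),
]

def ratio_metric(rows, numerator_idx, denominator_idx, group_idx=None):
    """Aggregate numerator and denominator separately, then divide."""
    num = defaultdict(float)
    den = defaultdict(float)
    for row in rows:
        key = row[group_idx] if group_idx is not None else "all"
        num[key] += row[numerator_idx]
        den[key] += row[denominator_idx]
    return {k: num[k] / den[k] for k in num}

# Correct: sum(revenue) / sum(orders) at whatever grain is requested.
by_region = ratio_metric(rows, 1, 2, group_idx=0)
overall = ratio_metric(rows, 1, 2)

# Naive: averaging the per-row ratios weights every row equally
# and produces a different overall number.
naive_overall = sum(r[1] / r[2] for r in rows) / len(rows)

print(by_region, overall, round(naive_overall, 2))
```

In this toy data the correct overall revenue per order is 90.0, while the naive average of row ratios is about 83.33. A semantic layer that stores the metric as "numerator over denominator" can re-aggregate correctly at any grain.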

Data Modeling Capabilities encompass the semantic layer’s ability to represent entities, relationships, hierarchies, and join paths across distributed data sources. A mature semantic layer supports dimensional modeling—organizing data around business entities (customers, products, orders) with associated dimensions (date hierarchies, geography, product categories). The layer must handle both simple star schemas and complex snowflake schemas, understand cardinality to prevent fan-out errors, and support many-to-many relationships elegantly.
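A fan-out error is easiest to see with a small example. The sketch below uses Python's built-in `sqlite3` module with hypothetical `orders` and `order_items` tables. It is an illustration of the problem, not of how any particular platform resolves it.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE orders (order_id INTEGER PRIMARY KEY, amount REAL);
    CREATE TABLE order_items (item_id INTEGER PRIMARY KEY, order_id INTEGER, sku TEXT);
    INSERT INTO orders VALUES (1, 100.0), (2, 50.0);
    INSERT INTO order_items VALUES (1, 1, 'A'), (2, 1, 'B'), (3, 2, 'C');
""")

# Fan-out: order 1 has two items, so its amount is counted twice after the join.
inflated = conn.execute(
    "SELECT SUM(o.amount) FROM orders o "
    "JOIN order_items i ON i.order_id = o.order_id"
).fetchone()[0]

# Cardinality-aware: collapse the "many" side to one row per order before joining.
correct = conn.execute("""
    SELECT SUM(o.amount)
    FROM orders o
    JOIN (SELECT order_id, COUNT(*) AS item_count
          FROM order_items GROUP BY order_id) i
      ON i.order_id = o.order_id
""").fetchone()[0]

print(inflated, correct)
```

The first query reports 250.0 for orders that total 150.0. A semantic layer that knows the one-to-many relationship between the two tables can generate the second form of query automatically.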

Query Federation and Virtualization describes the semantic layer’s approach to accessing data without requiring duplication or movement. Pure virtualization approaches never materialize data, instead rewriting queries to execute against physical source tables. Others support materialization of pre-calculated aggregates for performance while virtualizing base-level data. Still others sit within a single warehouse and rely on the warehouse to execute all queries. Organizations with data spread across multiple clouds or on-premises systems typically require virtualization to avoid expensive data duplication.
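As a rough sketch of the federation idea, the Python snippet below treats two separate in-memory SQLite databases as stand-ins for independent source systems. The table names and the `revenue_by_region` function are hypothetical. Real virtualization engines are far more sophisticated, but the basic pattern of pushing aggregation down to each source and joining small results locally is the same in spirit.

```python
import sqlite3

# Two independent in-memory databases stand in for separate source systems.
crm = sqlite3.connect(":memory:")
crm.executescript("""
    CREATE TABLE customers (customer_id INTEGER, region TEXT);
    INSERT INTO customers VALUES (1, 'east'), (2, 'west');
""")
billing = sqlite3.connect(":memory:")
billing.executescript("""
    CREATE TABLE invoices (customer_id INTEGER, amount REAL);
    INSERT INTO invoices VALUES (1, 100.0), (1, 25.0), (2, 40.0);
""")

def revenue_by_region():
    """Push aggregation down to the billing source, then join results locally."""
    totals = dict(billing.execute(
        "SELECT customer_id, SUM(amount) FROM invoices GROUP BY customer_id"
    ).fetchall())
    out = {}
    for customer_id, region in crm.execute(
        "SELECT customer_id, region FROM customers"
    ):
        out[region] = out.get(region, 0.0) + totals.get(customer_id, 0.0)
    return out

result = revenue_by_region()
print(result)
```

No rows are copied between the two sources; only the per-customer totals cross the boundary.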

Caching and Query Optimization evaluates how effectively the semantic layer reduces compute costs and improves query performance. Modern semantic layers employ multiple optimization techniques including intelligent caching of frequently accessed results, pre-computation of common aggregations, query result reuse, and semantic caching recognizing when two queries have similar meaning despite different surface forms. Organizations implementing semantic layers report reducing warehouse query costs by 30-70%, with some achieving greater reductions through careful aggregation strategies.
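The simplest form of recognizing equivalent queries is to normalize a request before using it as a cache key. The Python sketch below is a hypothetical illustration (`cache_key` and `ResultCache` are invented names). It only handles reordered metrics, dimensions, and filters, which is a much weaker notion than the semantic caching described above.

```python
import json

def cache_key(metrics, dimensions, filters):
    """Build an order-insensitive key so equivalent requests share a cache entry."""
    canonical = {
        "metrics": sorted(metrics),
        "dimensions": sorted(dimensions),
        "filters": sorted(f"{col}={val}" for col, val in filters.items()),
    }
    return json.dumps(canonical, sort_keys=True)

class ResultCache:
    def __init__(self):
        self._store = {}
        self.hits = 0
        self.misses = 0

    def get_or_compute(self, metrics, dimensions, filters, compute):
        key = cache_key(metrics, dimensions, filters)
        if key in self._store:
            self.hits += 1
        else:
            self.misses += 1
            self._store[key] = compute()  # only runs on a cache miss
        return self._store[key]

cache = ResultCache()
first = cache.get_or_compute(
    ["revenue"], ["region", "month"], {"year": 2025},
    lambda: [("east", "01", 10.0)],
)
# Same request with dimensions in a different order: served from cache.
second = cache.get_or_compute(
    ["revenue"], ["month", "region"], {"year": 2025},
    lambda: [],
)
print(cache.hits, cache.misses)
```

The second call never reaches the warehouse because both requests normalize to the same key.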

API Access and Integration indicates how flexibly the semantic layer connects to downstream tools. The best semantic layers support multiple API styles—SQL for legacy tools, GraphQL for modern applications, REST for web services—enabling any tool to access metrics without requiring tight coupling to a specific platform. Some provide JDBC drivers for compatibility with established BI tools, while others support embedding metrics directly into cloud storage as native objects.

Governance, Security, and Compliance encompasses the semantic layer’s ability to enforce who sees what data at row, column, and object levels; maintain audit trails; support regulatory requirements; and prevent data exposure. Modern semantic layers must support row-level security so regional managers see only their region’s data, column-level masking to protect personally identifiable information, and object-level security enabling federated data mesh architectures.
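Row-level and column-level controls can be pictured as a filter followed by a mask. The following Python sketch is a simplified assumption about how such a policy might be applied. The `Policy` class, `apply_policy` function, and sample rows are hypothetical and do not reflect any vendor's implementation.

```python
from dataclasses import dataclass, field

@dataclass
class Policy:
    allowed_regions: set                                # row-level: regions the user may see
    masked_columns: set = field(default_factory=set)    # column-level: values to hide

def apply_policy(rows, policy):
    """Filter rows by region, then mask sensitive columns in what remains."""
    visible = []
    for row in rows:
        if row["region"] not in policy.allowed_regions:
            continue
        visible.append({
            col: ("***" if col in policy.masked_columns else val)
            for col, val in row.items()
        })
    return visible

rows = [
    {"region": "east", "customer_email": "a@example.com", "revenue": 120.0},
    {"region": "west", "customer_email": "b@example.com", "revenue": 80.0},
]
east_manager = Policy(allowed_regions={"east"}, masked_columns={"customer_email"})
result = apply_policy(rows, east_manager)
print(result)
```

Enforcing the policy in the semantic layer, rather than in each dashboard, means every consuming tool sees the same restricted view.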

Leading Semantic Layer Platforms: Comprehensive Analysis

1. dbt Semantic Layer (MetricFlow)

The dbt Semantic Layer, powered by MetricFlow, represents the most widely adopted vendor-neutral semantic layer approach in 2026. dbt Labs positioned this tool squarely at data teams already using dbt for transformation, offering an integrated workflow where metric definitions live alongside data models in version-controlled YAML files.

The platform’s design philosophy treats semantic models as code artifacts subject to the same development practices as data transformations. Metrics, dimensions, and entities are defined in YAML files colocated with dbt models, enabling collaborative development through Git workflows, pull request reviews, and continuous integration pipelines. When a metric definition changes, the update goes through version control, enabling auditability and rollback capabilities.

From a metrics definition perspective, the dbt Semantic Layer supports measures (base-level aggregations), simple metrics, ratio metrics, and derived metrics (calculations depending on other metrics). Internal dbt testing shows 83% accuracy on natural language queries when backed by the semantic layer, compared to approximately 40% accuracy when LLMs write raw SQL against undecorated tables.

The platform’s virtualization approach runs queries through MetricFlow, which generates optimized SQL executed against the underlying warehouse (Snowflake, BigQuery, Databricks, or ten-plus other supported platforms). This warehouse-agnostic architecture provides significant flexibility for organizations with multi-cloud strategies or planning platform migrations. Metrics defined once in dbt flow to any connected BI tool—Tableau Cloud, Power BI, Looker, and numerous others—through REST API, GraphQL, and SQL interfaces.

The limitation most often cited is that the dbt Semantic Layer requires dbt Cloud—the managed platform offering—rather than operating as a standalone tool for dbt Core users. Additionally, some organizations report a steeper learning curve compared to drag-and-drop BI-native semantic layers, as YAML authorship requires familiarity with semantic layer concepts before users can author definitions effectively.

2. AtScale Universal Semantic Layer

AtScale emerged as a leader in the 2025 GigaOm Radar Report for semantic layers and metric stores, recognized for both leadership position and rapid innovation. The platform positions itself as a universal semantic layer—vendor-agnostic infrastructure that works across any data warehouse (Snowflake, BigQuery, Databricks, Redshift, PostgreSQL, and others) and connects to any BI tool.

AtScale’s architecture centers on a semantic modeling environment where business analysts define business logic once; a semantic query engine then transforms incoming queries into optimized warehouse queries. Rather than storing metrics in code files like dbt, AtScale provides a design canvas where analysts drag tables, define relationships, create conformed dimensions, and package these as reusable semantic models.

The platform’s performance optimization capabilities distinguish it in the competitive landscape. AtScale’s query optimizer automatically recognizes which pre-computed aggregates can answer incoming queries, rewrites queries to use materialized results when beneficial, and manages aggregate creation and maintenance automatically. Organizations report achieving sub-second query performance across billions of rows without requiring data duplication. In retail analytics use cases, AtScale customers report 80% of queries completing in under one second after implementation.

From a governance perspective, AtScale provides sophisticated fine-grained access control including row-level security (filtering so users see only data they’re authorized for), column-level security (masking or hiding sensitive fields), and object-level security (enabling data mesh architectures where different teams own different semantic components). These capabilities make AtScale particularly suitable for large enterprises with complex organizational structures, regulatory requirements, and federated governance models.

AtScale’s support for the Model Context Protocol (MCP) positions it well for AI integration. The platform exposes semantic definitions through an MCP server, allowing AI agents like Claude, ChatGPT, or custom LLM applications to discover and query semantic models without custom integration work.

The limitation most frequently cited is that AtScale represents an additional infrastructure component requiring separate deployment, management, and operational expertise. Organizations must evaluate whether the benefits justify the operational overhead of running another platform.

3. Snowflake Semantic Views

In early 2026, Snowflake announced Semantic View Autopilot, a significant evolution of its native semantic views capabilities that moved from complex manual modeling to AI-powered automated generation. Snowflake’s approach differs fundamentally from standalone semantic layer platforms—the layer is native to the data warehouse itself, eliminating the separate infrastructure requirement while ensuring tight integration with Snowflake’s query engine.

Semantic Views in Snowflake enable organizations to define logical tables representing business entities (customers, orders, products), then combine these to create metrics and dimensions. The YAML-based definition format creates semantic views as first-class Snowflake objects stored in the catalog alongside tables and views. Performance tends to be excellent for Snowflake-native organizations since the query engine understands semantic views natively rather than rewriting queries from an external system.

The major innovation in 2026 is Semantic View Autopilot, which uses machine learning to automatically discover metric definitions from warehouse metadata and BI tool usage patterns. Rather than requiring data teams to manually define every metric, Autopilot analyzes how metrics are currently calculated across connected BI and transformation tools (Looker, dbt Labs, Sigma, ThoughtSpot) and proposes semantic view definitions. This automation accelerates time-to-value, in many cases cutting modeling work that typically takes days down to minutes.

For organizations standardized on Snowflake, semantic views offer compelling advantages. The integration is seamless, the performance is excellent, and the operational overhead is minimal. Cortex Analyst, Snowflake’s natural language interface, leverages semantic view definitions to improve LLM accuracy, achieving significant improvements in data query accuracy by grounding responses in approved business definitions.

The limitation is that Snowflake Semantic Views only function within the Snowflake ecosystem. Organizations with multi-warehouse strategies, requirements to query data outside Snowflake, or needs to maintain warehouse-agnostic metric definitions will find Snowflake’s native approach insufficient.

4. Cube: Open-Source Semantic Layer at Scale

Cube emerged in 2026 as the leading open-source semantic layer platform, offering a distinctive combination of code-first development, strong API support, and proven scalability for organizations building analytics applications. Unlike dbt (which requires dbt Cloud for semantic layer capabilities) or proprietary platforms, Cube maintains a fully open-source core that organizations can deploy on-premises, in private clouds, or through Cube Cloud’s managed offering.

Cube’s architecture centers on cubes and views, objects that parallel star schema dimensions and facts. Cubes represent business entities and contain measures (aggregations) and dimensions (attributes for grouping). Views represent joins and relationships between cubes, enabling dimensional analysis. The code-first development model means cubes are defined in JavaScript or YAML, version-controlled alongside application code, and deployed through continuous integration pipelines.

From an API perspective, Cube offers exceptional breadth: REST, GraphQL, SQL, and Python APIs provide flexibility in how consuming applications interact with metrics. This API-first design makes Cube particularly appealing for organizations building custom analytics applications, embedded analytics for customers, or AI-driven discovery interfaces.

Cube’s caching and query optimization approach deserves particular attention. The platform supports pre-aggregations—materialized views that automatically compute and maintain common metric combinations. When queries arrive, Cube’s query planner evaluates which pre-aggregations satisfy the query, using cached results when beneficial. Organizations report dramatic cost reductions and performance improvements through strategic pre-aggregation configuration.
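The matching step can be sketched in a few lines of Python. This is a hypothetical simplification, not Cube's actual query planner: `PreAggregation` and `pick_pre_aggregation` are invented names, and the logic assumes additive measures (sums and counts) that can be safely re-aggregated from a finer-grained rollup.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class PreAggregation:
    name: str
    dimensions: frozenset
    measures: frozenset
    row_count: int  # rough size, used to prefer the smallest usable rollup

def pick_pre_aggregation(query_dims, query_measures, candidates):
    """Return the smallest rollup covering the query, or None to fall back to the warehouse."""
    usable = [
        c for c in candidates
        if set(query_dims) <= c.dimensions and set(query_measures) <= c.measures
    ]
    return min(usable, key=lambda c: c.row_count, default=None)

candidates = [
    PreAggregation(
        "by_day_region_product",
        frozenset({"day", "region", "product"}),
        frozenset({"revenue", "orders"}),
        5_000_000,
    ),
    PreAggregation(
        "by_day_region",
        frozenset({"day", "region"}),
        frozenset({"revenue"}),
        40_000,
    ),
]

chosen = pick_pre_aggregation({"region"}, {"revenue"}, candidates)
fallback = pick_pre_aggregation({"customer"}, {"revenue"}, candidates)
print(chosen.name, fallback)
```

Both rollups can answer a revenue-by-region query, so the planner picks the smaller one. A query on a dimension no rollup contains falls through to the source. Non-additive measures such as distinct counts would need different handling.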

Cube’s open-source nature is both its strength and its limitation: organizations gain full control over deployment, but they also accept responsibility for management, scaling, and operational excellence. While Cube Cloud provides managed hosting, organizations choosing self-hosted deployments must manage infrastructure, scaling, and upgrades themselves.

5. LookML: BI-Native Semantic Layer for Looker Organizations

LookML, Looker’s proprietary semantic modeling language, represents a different category of semantic layer—one embedded within a BI platform rather than existing as standalone infrastructure. Organizations standardized on Looker can build semantic layers directly within their familiar tool using LookML, avoiding the need for separate platforms.

LookML enables analysts to define dimensions, measures, and relationships through a declarative syntax that integrates seamlessly with Looker’s dashboard and visualization capabilities. The elegance of this approach is that semantic definitions and their consumption are tightly integrated—as analysts build dashboards, they reference LookML dimensions and measures, which automatically apply governance and consistency across all uses.

In 2025-2026, Google significantly expanded LookML’s AI capabilities through integration with Gemini, Google’s foundational large language model. Looker’s Agents—AI-powered interfaces to LookML models—enable natural language querying of semantic definitions with high accuracy because the agent understands LookML’s governed business logic rather than attempting to infer meaning from raw tables. Internal Google testing shows that LookML reduces data errors in generative AI natural language queries by as much as two-thirds.

LookML’s advantages are substantial for Looker-standardized organizations. The semantic layer integrates natively with dashboards, access control, and visualization capabilities. Advanced features like time-based dimensions, drill-down hierarchies, and derived tables provide sophisticated modeling capabilities.

The limitation of LookML is vendor lock-in and limited multi-BI support. Organizations with heterogeneous BI tool environments cannot rely on LookML alone to provide universal metric definitions—they must maintain additional semantic layers for non-Looker tools.

6. Databricks Metric Views

Databricks Metric Views, announced in 2025 and reaching general availability in early 2026, represent the lakehouse platform’s native semantic layer offering. Similar to Snowflake Semantic Views, Metric Views provide native semantic modeling within Databricks, enabling organizations standardized on the lakehouse platform to build semantic models without separate infrastructure.

Metric Views separate measure definitions from dimension groupings, allowing organizations to define a metric once and query it across any available dimension at runtime. This separation is technically significant—it prevents the proliferation of separate materialized views for every possible dimension combination, instead letting the query engine dynamically generate correct aggregations regardless of grouping dimensions.
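The idea of defining a measure once and grouping it at query time can be sketched generically. The Python example below is an assumption-laden illustration using SQLite, not Databricks syntax: the `MEASURES` registry, `DIMENSIONS` set, `build_query` function, and `sales` table are all hypothetical.

```python
import sqlite3

# Hypothetical metric definitions: name -> SQL aggregate expression.
MEASURES = {
    "revenue": "SUM(amount)",
    "avg_order_value": "SUM(amount) * 1.0 / COUNT(DISTINCT order_id)",
}
DIMENSIONS = {"region", "channel"}

def build_query(measure, group_by):
    """Compose SQL for one measure grouped by any subset of known dimensions."""
    unknown = set(group_by) - DIMENSIONS
    if measure not in MEASURES or unknown:
        raise ValueError(f"unknown measure or dimensions: {measure}, {sorted(unknown)}")
    dims = ", ".join(group_by)
    select = f"{dims}, " if dims else ""
    tail = f" GROUP BY {dims} ORDER BY {dims}" if dims else ""
    return f"SELECT {select}{MEASURES[measure]} AS {measure} FROM sales{tail}"

conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE sales (order_id INTEGER, region TEXT, channel TEXT, amount REAL);
    INSERT INTO sales VALUES
        (1, 'east', 'web', 100.0),
        (2, 'east', 'store', 300.0),
        (3, 'west', 'web', 50.0);
""")

by_region = conn.execute(build_query("avg_order_value", ["region"])).fetchall()
by_channel = conn.execute(build_query("avg_order_value", ["channel"])).fetchall()
total = conn.execute(build_query("revenue", [])).fetchall()
print(by_region, by_channel, total)
```

The same `avg_order_value` definition yields correct results by region and by channel without a separate materialized view for each grouping, because the aggregate expression is re-evaluated at the requested grain.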

Databricks’ implementation leverages Unity Catalog for governance, enabling row-level security, column-level masking, and audit logging of metric access. Organizations can define metrics through SQL or a visual Catalog Explorer UI, with YAML formats storing the underlying definitions.

The advantage for Databricks-standardized organizations mirrors that of Snowflake Semantic Views—native integration, excellent performance, minimal operational overhead. The limitation similarly restricts portability to the Databricks ecosystem, requiring alternative solutions for organizations with multi-warehouse requirements.

7. Palantir Foundry: Ontology-Driven Enterprise Platform

Palantir Foundry represents a different category within semantic layers—an ontology-driven operational platform where semantic definitions serve not just analytics but operational execution. The platform combines semantic digital twins, kinetic data mapping, and dynamic governance across three integrated layers. Palantir’s approach models the entire enterprise as a system of connected entities, relationships, and operations, not just metrics for reporting.

Foundry’s breadth—providing semantic definitions that simultaneously serve analytics, operational applications, and AI reasoning—represents unique capabilities. Organizations can connect analytics insights directly to operational execution, creating closed-loop systems where insights automatically trigger actions.

The limitation of Palantir Foundry is significant complexity, substantial implementation effort, and pricing structured for large enterprises. Foundry is not a tool organizations adopt quickly or easily—implementations typically require dedicated engagement with Palantir’s professional services, substantial internal expertise development, and multi-month timelines.

8. Denodo: Mature Data Virtualization Leader

Denodo represents the mature end of the data virtualization and semantic layer market, with decades of enterprise deployment experience. The platform provides comprehensive data virtualization capabilities with a business-friendly semantic layer on top, enabling organizations to create unified views across heterogeneous data sources.

Denodo’s strength lies in its enterprise-grade virtualization engine capable of federating queries across virtually any data source—relational databases, NoSQL systems, cloud warehouses, APIs, and unstructured data. The semantic layer sits atop this virtualization infrastructure, providing business users with consistent, governed views of data regardless of underlying technical complexity.

For organizations with significant on-premises infrastructure, complex data landscapes, or requirements for sophisticated data masking and security policies, Denodo provides proven enterprise capabilities. The platform’s maturity means extensive features for governance, security, and operational management.

The tradeoff is that Denodo represents a more traditional enterprise architecture approach, potentially requiring more upfront configuration and expertise compared to newer cloud-native semantic layer platforms. Organizations evaluating Denodo should consider whether its comprehensive capabilities justify the implementation complexity for their specific use cases.

9. Coalesce: Data Transformation with Semantic Context

Coalesce approaches the semantic layer from a data transformation perspective, providing visual interfaces for building data pipelines with semantic context embedded throughout. The platform enables data teams to define transformations visually while automatically maintaining metadata, lineage, and business context.

Coalesce’s semantic capabilities emerge from its transformation-centric approach—as data engineers build pipelines, they simultaneously document business logic, define metrics, and establish governance policies. This integration of transformation and semantics appeals to organizations wanting a unified platform for both data engineering and semantic layer management.

The platform’s ML-based features automate aspects of semantic model creation by analyzing existing transformations and suggesting business-friendly definitions. For organizations with Snowflake as their primary warehouse, Coalesce provides particularly tight integration.

Organizations should evaluate whether Coalesce’s transformation-first approach aligns with their architecture, or whether they need semantic layer capabilities independent of transformation tooling.

10. Promethium: Context-Native Architecture

While traditional semantic layers focus on metric definitions and query federation, Promethium takes a fundamentally different approach through its 360° Context Hub — a layer that aggregates not just semantic definitions but all business and technical context across the enterprise. This context-native architecture powers Mantra™, Promethium’s Data Answer Agent, enabling accurate natural language querying across distributed data sources without requiring data movement or centralization.

The key distinction is architectural. Most semantic layers define metrics in isolation — they translate business logic for a single warehouse or BI tool. Promethium’s Context Hub ingests metadata from data catalogs (Alation, Collibra, Atlan), BI tools (Tableau, Power BI, Looker), and existing semantic layers (dbt, AtScale, Cube) as additional context inputs rather than replacing them. Organizations preserve their current semantic layer investments while gaining a unified, governed intelligence layer that understands context from all systems simultaneously.

This approach addresses a gap the rest of the market largely ignores: business context fragmentation. Enterprise data teams typically maintain semantic definitions in one tool, business glossaries in another, tribal knowledge in documentation (or nowhere), and governance policies in yet another system. Promethium’s Context Hub unifies these into a single context model — combining technical metadata, semantic definitions, business rules, and agentic memory and user feedback from successful queries — so every answer reflects the full picture, not just one slice of it.

From an AI and agent integration perspective, Promethium supports native MCP (Model Context Protocol), enabling any external AI agent to query enterprise data with full context and governance (such as internal data agents or third-party tools like Claude). This goes beyond exposing an API endpoint — the platform ensures agents receive consistently defined business logic, complete lineage, and explainable reasoning for every result. Named a Gartner Cool Vendor in 2024, Promethium is purpose-built for the agent era rather than retrofitting AI capabilities onto traditional infrastructure.

The limitation is scope: Promethium is not a standalone semantic layer in the traditional sense. It doesn’t replace dbt or AtScale for metric definition workflows (although organizations can also define metrics directly within Promethium); it sits above them as a context and access layer. Organizations looking for a dedicated metric authoring environment will still need a complementary tool. The platform’s enterprise-scale track record is also still growing compared to more established vendors.

Best for: Organizations that already have semantic layer investments but struggle with fragmented context across tools and teams — particularly those implementing AI agents that need unified, governed access to distributed data with complete business context and explainability.

Semantic Layer Platform Comparison

| Platform | Multi-Source Federation | Business Rule Management | Natural Language Querying | BI Tool Integrations | Agent/API Access | Governance & Lineage | Deployment Model |
|---|---|---|---|---|---|---|---|
| dbt Semantic Layer | ✅ Extensive (10+ warehouses) | ✅ YAML-based, version-controlled | ✅ 83% LLM accuracy | ✅ Tableau, Power BI, Looker, others | ✅ REST, GraphQL, SQL | ✅ Git-based, dbt lineage | ☁️ dbt Cloud (managed) |
| AtScale | ✅ Extensive (any warehouse) | ✅ Visual canvas, reusable models | ✅ MCP support for AI agents | ✅ Universal (any BI tool) | ✅ JDBC, REST, MCP | ✅ Row/column/object-level security | ☁️ Cloud or 🏢 On-premises |
| Snowflake Semantic Views | ⚠️ Snowflake only | ✅ YAML + AI Autopilot | ✅ Cortex Analyst native | ✅ Looker, Sigma, ThoughtSpot | ✅ SQL, REST | ✅ Native catalog integration | ☁️ Snowflake native |
| Cube | ✅ Extensive (any source) | ✅ JavaScript/YAML code-first | ✅ API-first for AI integration | ✅ Universal connections | ✅ REST, GraphQL, SQL, Python | ✅ Code-based lineage | ☁️ Cloud or 🏢 Self-hosted |
| LookML | ⚠️ Limited (Looker sources) | ✅ Declarative modeling | ✅ Gemini integration | ⚠️ Looker-centric | ⚠️ Limited outside Looker | ✅ Native Looker governance | ☁️ Google Cloud |
| Databricks Metric Views | ⚠️ Databricks only | ✅ SQL + visual UI | ✅ Native lakehouse AI | ✅ Growing ecosystem | ✅ SQL, REST | ✅ Unity Catalog integration | ☁️ Databricks native |
| Palantir Foundry | ✅ Ontology-driven federation | ✅ Enterprise ontology modeling | ✅ Operational AI integration | ✅ Custom integrations | ✅ Extensive APIs | ✅ Enterprise-grade governance | ☁️ Cloud or 🏢 On-premises |
| Denodo | ✅ Extensive (any source) | ✅ Enterprise virtualization | ⚠️ Traditional interfaces | ✅ JDBC/ODBC universal | ✅ REST, JDBC, ODBC | ✅ Enterprise governance | ☁️ Cloud or 🏢 On-premises |
| Coalesce | ⚠️ Snowflake-focused | ✅ Visual transformation + semantics | ⚠️ ML-assisted suggestions | ⚠️ Snowflake ecosystem | ⚠️ Limited API access | ✅ Transformation lineage | ☁️ Cloud (Snowflake) |
| Promethium | ✅ Zero-copy access to all data | ✅ Context Hub ingests all semantic layers | ✅ Mantra AI agent, highest accuracy | ✅ Universal (shares to any tool) | ✅ API, agent, workflow integration | ✅ Full lineage + context tracking | ☁️ Hybrid (Control plane + VPC) |

Legend:

✅ Strong capability

⚠️ Limited or specific use case

☁️ Cloud-native

🏢 On-premises or hybrid available

Real-World Impact and Business Outcomes

Organizations implementing semantic layers effectively document substantial measurable business impact. A home improvement retailer operating one of North America’s largest chains faced challenges with inconsistent metrics, outdated OLAP cubes, and limited self-service analytics. After implementing AtScale’s semantic layer, the organization unified business logic, delivered governed metrics directly into Excel and dashboards, and enabled sub-second query performance across terabytes of data.

A financial services organization managing over 20 legacy applications for risk management faced challenges where building a complete risk report required nearly two months of digging across disconnected systems. After implementing a semantic layer with knowledge graph capabilities, the organization could answer questions like “What controls and policies relate to this risk?” in seconds instead of days.

In quantitative terms, organizations typically report a 3x cost reduction from eliminating duplicate data preparation work, $2.3M in annual savings for typical mid-to-large organizations through reduced IT overhead, and 4x faster time-to-insight as stakeholders access consistent, pre-defined metrics instantly.

Open Standards and the Semantic Interchange Initiative

The emergence of the Open Semantic Interchange (OSI) in 2025 represents a pivotal industry development recognizing that semantic consistency requires standardization, not proprietary lock-in. Competitors including dbt Labs, Snowflake, and Salesforce began collaborating to standardize semantic layer definitions in vendor-neutral YAML format. The OSI uses MetricFlow as its declarative specification, enabling organizations to define metrics once and have every tool consume those definitions.

This standardization addresses a fundamental problem: organizations were forced to choose between vendor-specific implementations locked into particular platforms or attempting to maintain consistent definitions manually across multiple tools. OSI enables a third option—vendor-neutral definitions that any platform can consume.

The strategic importance of OSI extends beyond avoiding vendor lock-in. As the agentic web emerges—where AI agents communicate with each other more than with humans—semantic consistency becomes foundational to agent coordination. When agents can discover and reference standardized semantic definitions (through OSI format), they can collaborate reliably without requiring human intermediation.

Implementation Considerations and Selection Guidance

The diversity of semantic layer approaches means organizations must evaluate their specific requirements carefully before selecting platforms. Organizations with mature dbt practices and multi-warehouse strategies benefit most from the dbt Semantic Layer. The warehouse-agnostic approach provides flexibility, the code-first model aligns with dbt’s philosophy, and integration with existing dbt workflows minimizes operational disruption.

Organizations with substantial complexity—hundreds of data sources, multiple clouds, sophisticated dimensional modeling requirements, and federated governance needs—typically benefit from enterprise platforms like AtScale. The investment in additional infrastructure pays dividends through operational efficiency, consistency, and governance rigor.

Organizations standardized on Snowflake should evaluate Semantic Views carefully, particularly with the new Autopilot capabilities that dramatically reduce modeling effort. The native integration, excellent performance, and minimal operational overhead often outweigh the portability limitations for Snowflake-standardized shops. Similar logic applies to Databricks organizations considering Metric Views.

Organizations building analytics applications or embedded analytics for customers often find Cube most compelling. The open-source foundation, strong APIs, pre-aggregation capabilities, and flexible deployment options make Cube particularly suitable for custom implementations.

Organizations prioritizing integration with their existing BI tool ecosystem—especially Looker shops—should evaluate LookML’s sophisticated modeling capabilities and tight Gemini integration. However, organizations with multi-BI tool requirements or planning significant BI platform changes should avoid locking metrics entirely into LookML.

Conclusion: The Semantic Layer as Strategic Infrastructure

By 2026, the semantic layer has evolved from architectural pattern into competitive infrastructure. Organizations that invested early in semantic consistency are pulling ahead of competitors through faster decision-making, more reliable AI systems, and more efficient data operations. The strategic value compounds over time—as semantic definitions accumulate and become business products consumed by more teams and use cases, the leverage increases.

The emerging consensus among data leaders is clear: semantic layers are moving from “best practice” to core infrastructure. As enterprises push generative AI deeper into analytics and decision-making workflows, the need for consistent metrics, governed definitions, and trusted context becomes non-negotiable. Organizations lacking semantic foundations will struggle with unreliable AI, inconsistent reporting, and inability to scale analytics beyond small dedicated teams. Those that invest intentionally in semantic infrastructure will build sustainable competitive advantages through trustworthy, scalable analytics and AI-driven decision-making.

The semantic layer market offers diverse solutions that address fundamentally the same problem: enabling organizations to treat business meaning as a managed asset rather than accepting fragmentation across tools and teams. What remains constant across all approaches is that organizations choosing any well-designed semantic layer achieve measurable improvements in consistency, efficiency, and decision-making quality. The question is no longer whether semantic layers deliver value; the evidence for that is overwhelming. The remaining question for data leaders is which approach aligns best with their organizational architecture, governance requirements, and strategic priorities.