5 Signs Your Semantic Layer Is Becoming a Bottleneck (And How to Fix It)

Semantic layers promise to simplify data access by translating technical schemas into business-friendly terms. Yet many implementations achieve the opposite—creating new complexity that slows insights rather than accelerating them. When your semantic layer becomes the bottleneck it was meant to eliminate, users circumvent it, data teams drown in maintenance work, and the investment delivers negative ROI.

This guide identifies five critical warning signs that your semantic layer has degraded into an operational liability. More importantly, it provides specific remediation strategies to restore value—and shows how modern semantic layer architectures address these limitations from the ground up.

What is a context graph and why are they the next evolution of context engineering?

Get your comprehensive guide now.

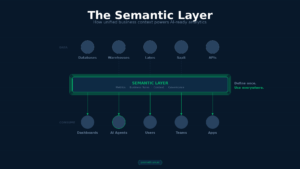

Understanding When Abstraction Becomes Obstruction

A semantic layer abstracts technical complexity by providing a unified business view of enterprise data. When implemented well, it eliminates metric inconsistencies, streamlines governance, and democratizes data access. When implemented poorly, it creates a new layer of fragility that magnifies the problems it was designed to solve.

The fundamental challenge: semantic layer bottlenecks often emerge gradually. A system that works adequately with ten users and fifty metrics fails catastrophically when scaled to hundreds of users and thousands of metrics. Performance degrades as data volumes grow, yet the underlying issues remain invisible until users abandon the system entirely.

This gradual failure creates a dangerous dynamic. Organizations continue investing in a broken system without recognizing the root causes of degradation. Meanwhile, users lose confidence and revert to shadow analytics—recreating the exact fragmentation the semantic layer was meant to eliminate. According to industry data, 57% of organizations using vendor-native semantic layer implementations are already considering platform changes, signaling widespread dissatisfaction.

Sign One: Query Performance Degradation

The most immediately observable symptom of semantic layer failure is unacceptable query performance. When users wait 10-30 seconds for standard metrics queries, or when dashboards time out during peak usage, the semantic layer has become an operational hindrance rather than an enabler.

Performance degradation manifests through multiple patterns. Without intelligent caching, every query recalculates results from raw data, driving up both response times and compute costs. Organizations report paying substantially more for warehouse resources while delivering a frustrating user experience that drives adoption down.

Measure performance systematically: track P50, P95, and P99 response times for representative queries. Well-optimized semantic layers should achieve sub-second response times for approximately 90% of standard metrics queries. When P95 latencies exceed three seconds for previously fast queries, or when month-over-month latencies increase by 50% or more, the system has entered critical territory.
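The measurement above can be sketched in a few lines of Python. This is a minimal illustration, not a monitoring product: the function names and the input format (a plain list of latencies in seconds) are assumptions, the nearest-rank percentile method is one of several valid definitions, and the 50% month-over-month rule is applied here to P95 specifically.

```python
import math

def percentile(samples, pct):
    """Nearest-rank percentile: the smallest sample with at least pct% of samples at or below it."""
    if not samples:
        raise ValueError("samples must not be empty")
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]

def latency_report(samples_s, previous_p95_s=None):
    """Summarize query latencies (seconds) against the thresholds described above."""
    p50, p95, p99 = (percentile(samples_s, p) for p in (50, 95, 99))
    # Share of queries answered in under one second (target: roughly 90%).
    sub_second_share = sum(1 for s in samples_s if s < 1.0) / len(samples_s)
    # Month-over-month growth of P95, if last month's value is known.
    growth = None
    if previous_p95_s:
        growth = (p95 - previous_p95_s) / previous_p95_s
    # Critical: P95 above three seconds, or P95 up by 50% or more.
    critical = p95 > 3.0 or (growth is not None and growth >= 0.5)
    return {
        "p50": p50,
        "p95": p95,
        "p99": p99,
        "sub_second_share": sub_second_share,
        "p95_growth": growth,
        "critical": critical,
    }
```

Feeding it the latencies of a fixed set of representative queries each month keeps the trend comparable over time.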

The performance problem becomes acute under concurrent load. A semantic layer may function adequately for a small pilot team but fail when rolled out organization-wide. Real-world results show the gap between optimized and unoptimized implementations: one global retailer achieved sub-second query response times for more than 80% of queries even as AI copilots and dashboards hit the same warehouse concurrently. In contrast, organizations without proper semantic layer optimization report query timeouts and errors that make the system unreliable for operational use cases.

Performance degradation also appears in cost indicators. Without intelligent query optimization, organizations see 10-fold spikes in query volume when AI agents are deployed, yet cost per query increases rather than decreases. This pattern indicates the semantic layer is not optimizing queries or reusing cached results effectively.

Modern Solution: Federated Query Pushdown

Architectures that push query execution to underlying data platforms eliminate centralized processing bottlenecks. Rather than pulling data into a semantic layer for processing, federated approaches execute operations where data lives—leveraging the full optimization capabilities of platforms like Snowflake, Databricks, or cloud warehouses. This approach delivers better performance while reducing infrastructure costs, since compute happens on already-optimized systems rather than through an additional processing layer.

Sign Two: Coverage Gaps Creating Modeling Gridlock

A semantic layer should expand data accessibility, not constrain it. Yet organizations frequently report that only 10-30% of frequently requested metrics have been formally defined after 6-12 months of implementation effort. This low coverage rate creates a paradox: the semantic layer increases load on data teams rather than reducing it.

Coverage gaps manifest in measurable ways. First, track the percentage of critical business metrics formally defined in your semantic layer versus those accessed through direct queries or custom SQL. When more than 30% of critical metrics exist outside the semantic layer, users view it as insufficiently comprehensive.

Second, measure time-to-production for new metric definitions. Organizations with functioning semantic layers deploy new metrics within 1-4 weeks. When adding metrics requires formal change requests, architectural review, and multi-team approval cycles stretching into months, the semantic layer has become a bottleneck rather than an accelerator.

Third, assess what percentage of ad-hoc requests are handled outside the semantic layer. When data teams report that 50-70% of analytical requests require custom SQL rather than semantic layer definitions, massive coverage gaps exist. These ad-hoc requests divert engineering time from strategic work and create the exact technical debt the semantic layer was meant to eliminate.
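As a rough sketch, the first and third checks reduce to two ratios. The function and field names below are hypothetical, and the inputs (a list of critical metric names, the names defined in the layer, and request counts from a ticketing system) are assumed to be available from your own inventory.

```python
def coverage_signals(critical_metrics, defined_metrics, adhoc_total, adhoc_custom_sql):
    """Compute the coverage ratios described above and flag the thresholds from the text."""
    critical = set(critical_metrics)
    missing = critical - set(defined_metrics)
    # Share of critical metrics that live outside the semantic layer.
    outside_share = len(missing) / len(critical) if critical else 0.0
    # Share of ad-hoc requests answered with custom SQL.
    custom_sql_share = adhoc_custom_sql / adhoc_total if adhoc_total else 0.0
    return {
        "missing_metrics": sorted(missing),
        "outside_share": outside_share,
        "coverage_gap": outside_share > 0.30,   # more than 30% outside the layer
        "custom_sql_share": custom_sql_share,
        "adhoc_gap": custom_sql_share >= 0.50,  # 50-70% range cited above
    }
```

The list of missing metrics doubles as a backlog for the modeling team.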

The coverage problem becomes particularly acute for exploratory analytics. Semantic layers are typically optimized for known, predefined queries—dashboards and standard reports. They often struggle to support ad-hoc analysis where users combine dimensions and metrics in novel ways not anticipated during semantic model design.

Modern Solution: Zero-Copy Access

Rather than requiring all data to be pre-modeled before access, zero-copy architectures provide instant access to any connected data source. Users can explore and prototype with raw data, then promote successful patterns into governed semantic definitions. This approach eliminates “wait for modeling” delays while still providing governance where it matters—on production metrics rather than exploratory queries.

Sign Three: Maintenance Overhead Exceeding Value

When maintaining a semantic layer consumes 40-60% of data team time rather than 15-25%, the system has failed its core value proposition of consolidating redundant logic. This maintenance burden manifests through multiple symptoms.

First, track the percentage of data team time spent maintaining the semantic layer versus developing new analytics capabilities. High maintenance overhead indicates the semantic layer has introduced unnecessary complexity rather than reducing it.

Second, measure the complexity of making changes. When a simple metric definition change requires coordination across multiple teams, formal review processes, testing cycles, and deployment procedures, governance overhead has become excessive. Track the number of change approval cycles required and compare this to your organization’s tolerance for process overhead.

Third, assess integration friction between the semantic layer and existing data workflows. If the semantic layer requires separate tooling, different deployment processes, or specialized skills separate from core data stack tools, it becomes an operational burden. When teams report the semantic layer feels disconnected from their primary workflow rather than integrated into it, architectural misalignment exists.

Data freshness issues compound maintenance overhead. If metrics become stale or delays exist in reflecting new data, users lose confidence in system reliability. This is particularly problematic for operational use cases requiring near real-time data.

Modern Solution: Agentic Memory

Rather than requiring manual updates to semantic definitions, systems with agentic memory learn context from actual usage patterns. When users repeatedly query similar data or ask similar questions, the system captures this context automatically—reducing the burden of maintaining comprehensive documentation while ensuring definitions reflect actual business needs. This approach transforms maintenance from a manual, continuous effort into an automated learning process.

Sign Four: Inflexibility Constraining Innovation

Semantic layers typically excel at serving predefined queries through BI tools but struggle with ad-hoc questions that don’t fit pre-modeled schemas. When users can only ask questions anticipated during semantic model design, the semantic layer constrains analytical innovation rather than enabling it.

This inflexibility manifests in specific patterns. First, examine what percentage of data exploration happens outside the semantic layer. When analysts and data scientists consistently bypass the semantic layer for exploratory work, it signals the system cannot support flexible investigation.

Second, assess how well the semantic layer supports emerging use cases like AI agents and natural language queries. Many semantic layers were designed for human analysts working through BI tools—not for AI systems that need to dynamically construct queries based on conversational context. When organizations deploy AI agents, they often discover their semantic layer cannot provide the dynamic query capabilities required.

Third, measure how quickly the semantic layer adapts to changing business requirements. In fast-moving organizations, business questions evolve rapidly. When the semantic layer requires lengthy update cycles to support new analytical patterns, it becomes a constraint on business agility.

Modern Solution: Natural Language Interface

Interfaces that translate natural language questions directly into queries eliminate the need for schema changes when business questions evolve. Rather than requiring analysts to understand technical schemas or wait for new metrics to be modeled, users ask questions in plain English. The system interprets intent, constructs appropriate queries, and delivers results—handling ad-hoc questions without requiring schema modifications. This approach provides flexibility without sacrificing governance, since policies are applied at query execution time regardless of how questions are phrased.

Sign Five: Governance Overhead Slowing Execution

Governance is essential, but when governance processes make data access slower and more difficult, users circumvent the system entirely. The goal is not governance versus speed—it’s governance that enables rather than obstructs.

Governance overhead appears in several forms. First, measure time-to-access for new users or use cases. When provisioning data access requires weeks of approval workflows, users find workarounds. Organizations with effective governance provision access within days while maintaining appropriate controls.

Second, assess whether governance rules are consistently applied. When different data sources or tools have different governance implementations, gaps and inconsistencies emerge. Users discover some data sources enforce strict controls while others allow unrestricted access—creating both security risks and user confusion.

Third, examine whether governance is enforced at the right granularity. Some semantic layers apply governance only at the data source access level, not at the query or metric level. This forces a choice between granting broad access (risking inappropriate usage) and restricting access entirely (blocking legitimate use cases).

Modern Solution: Context-Aware Query Planning

Rather than forcing users through pre-approval workflows, context-aware systems apply governance rules automatically at query execution time. The system understands data sensitivity, user permissions, and query intent—then applies appropriate masking, filtering, or access controls without manual intervention. This approach maintains security and compliance without creating process bottlenecks, since governance enforcement happens transparently as part of query execution rather than as a separate approval workflow.
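To make the idea concrete, here is a deliberately small sketch of enforcement at query time: rows are filtered and sensitive columns masked based on who is asking, with no approval step. The policy table, role names, and the `region` column are all invented for illustration; a real platform would read policies from a catalog and apply them inside the query plan rather than on result rows.

```python
# Hypothetical policy table: sensitive column -> role that may see it unmasked.
SENSITIVE_COLUMNS = {"email": "pii_reader", "salary": "hr_analyst"}

def apply_policies(rows, user_roles, allowed_regions, mask="***"):
    """Apply row-level filtering and column masking to a result set."""
    result = []
    for row in rows:
        # Row-level filter: drop rows outside the regions the user may see.
        if row.get("region") not in allowed_regions:
            continue
        visible = {}
        for column, value in row.items():
            required_role = SENSITIVE_COLUMNS.get(column)
            # Column masking: hide sensitive values unless the user holds the role.
            if required_role is not None and required_role not in user_roles:
                visible[column] = mask
            else:
                visible[column] = value
        result.append(visible)
    return result
```

The same query therefore returns different results for different users, which is what lets access be granted immediately while controls stay in force.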

Measuring Bottleneck Severity

Once potential bottleneck symptoms are recognized, systematic measurement quantifies severity and prioritizes remediation efforts. Establish baseline metrics and track them continuously.

For performance, measure P50, P95, and P99 query response times for representative query types. Benchmark against performance targets: interactive dashboards require P95 latency under one second, and operational analytics under three seconds. Track month-over-month trends to identify progressive degradation.

For coverage, catalog what percentage of enterprise data and metrics have been formalized within the semantic layer. Create a prioritization matrix ranking metrics by business criticality and usage frequency. Most organizations should target 60-80% coverage of critical business metrics within 12 months. Coverage below 40% after 12 months indicates inadequate scope or insufficient resources.

For maintenance, conduct time audits tracking data team effort on semantic layer maintenance versus new capability development. Well-designed semantic layers typically consume 15-25% of effort for maintenance, with the remainder focused on new capabilities. When maintenance exceeds 50% of effort, the semantic layer has become an operational liability.
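A time audit of this kind can be summarized with a short helper. The sketch below assumes hours are logged per activity and that you decide which activities count as maintenance; the "elevated" label for the band between 25% and 50% is an assumption added here, since the text only names the two ends.

```python
def maintenance_share(hours_by_activity, maintenance_activities):
    """Return (share, status) for semantic layer maintenance effort."""
    total = sum(hours_by_activity.values())
    if total <= 0:
        raise ValueError("no hours logged")
    maintenance = sum(
        hours for activity, hours in hours_by_activity.items()
        if activity in maintenance_activities
    )
    share = maintenance / total
    if share > 0.50:
        status = "liability"   # maintenance exceeds 50% of effort
    elif share > 0.25:
        status = "elevated"    # assumed middle band, above the typical 15-25%
    else:
        status = "healthy"
    return share, status
```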

For flexibility, measure what percentage of ad-hoc analytical work happens outside the semantic layer. High bypass rates indicate insufficient flexibility for exploratory analysis.

For governance, measure average time-to-access for new users or use cases. Track the percentage of access requests that require manual approval versus automated policy enforcement. High manual overhead indicates governance processes need modernization.
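Both governance measures can be derived from an access request log. The record shape below (`requested`, `granted`, `manual`) is hypothetical; adapt it to whatever your ticketing or IAM system exports.

```python
from datetime import date
from statistics import mean

def governance_overhead(requests):
    """Average days from request to access, and share of requests approved manually."""
    if not requests:
        raise ValueError("no access requests to analyze")
    days = [(r["granted"] - r["requested"]).days for r in requests]
    manual_share = sum(1 for r in requests if r["manual"]) / len(requests)
    return {"avg_days_to_access": mean(days), "manual_share": manual_share}

# Example with two made-up requests.
example = governance_overhead([
    {"requested": date(2024, 3, 1), "granted": date(2024, 3, 3), "manual": False},
    {"requested": date(2024, 3, 1), "granted": date(2024, 3, 21), "manual": True},
])
```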

Root Cause Analysis: Semantic Layer Versus Data Source Issues

Before implementing remediation strategies, correctly diagnose whether problems originate within the semantic layer or underlying data sources. This distinction prevents wasted effort focused on the wrong layer.

To determine performance problem origins, compare query performance through the semantic layer against equivalent queries executed directly against the warehouse. If a query takes 15 seconds through the semantic layer but 3 seconds directly against the warehouse, the semantic layer introduces 12 seconds of overhead. If both take approximately 15 seconds, the underlying data source is the bottleneck.
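The comparison is simple arithmetic, sketched below for a single slow query. The `tolerance` parameter is an assumption standing in for "approximately": if the layer accounts for no more than that share of total latency, the warehouse is treated as the bottleneck.

```python
def diagnose_latency(via_layer_s, direct_s, tolerance=0.2):
    """Attribute a slow query to the semantic layer or the underlying data source."""
    if via_layer_s <= 0 or direct_s < 0:
        raise ValueError("latencies must be positive")
    # Time added on top of the direct warehouse query.
    overhead_s = max(0.0, via_layer_s - direct_s)
    overhead_share = overhead_s / via_layer_s
    origin = "semantic layer" if overhead_share > tolerance else "data source"
    return {"overhead_s": overhead_s, "overhead_share": overhead_share, "origin": origin}
```

For the figures in the text, `diagnose_latency(15, 3)` attributes 12 seconds (80% of the total) to the semantic layer, while `diagnose_latency(15, 15)` points at the data source. Run each side several times and compare medians so that a single cold cache does not skew the result.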

Further diagnose by examining query execution traces and logs. Determine whether overhead comes from query translation, joining tables across sources, insufficient caching, or retrieving excessive data volumes. Many semantic layers provide execution traces showing how queries are rewritten and which optimizations are applied.

To determine metric inconsistency origins, select a specific metric and trace its calculation from source tables through the semantic layer to final output. Compare outputs across different tools and interfaces. If the semantic layer produces consistent output but different BI tools display different results, the inconsistency originates in tool interpretation. If the semantic layer itself produces inconsistent results, metric definitions are ambiguous or incorrectly implemented.
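That decision procedure can be written down directly. In this sketch, `layer_runs` holds the values the semantic layer returned for the same metric and filters through different interfaces, and `tool_values` maps each BI tool to the number it displays; both names and the floating-point tolerance are assumptions.

```python
import math

def locate_inconsistency(layer_runs, tool_values, rel_tol=1e-9):
    """Return (origin, diverging_tools) for one metric traced end to end."""
    if not layer_runs:
        raise ValueError("need at least one semantic layer result")
    reference = layer_runs[0]
    layer_consistent = all(
        math.isclose(value, reference, rel_tol=rel_tol) for value in layer_runs
    )
    if not layer_consistent:
        # The layer disagrees with itself: the definition is ambiguous or wrong.
        return "semantic layer", []
    diverging = sorted(
        tool for tool, value in tool_values.items()
        if not math.isclose(value, reference, rel_tol=rel_tol)
    )
    if diverging:
        # The layer is stable, so the difference arises in the tools.
        return "tool interpretation", diverging
    return "consistent", []
```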

Remediation Strategies

Once bottlenecks are accurately diagnosed, implement targeted remediation strategies aligned to specific problems.

For Performance Degradation:

Implement intelligent caching that stores frequently accessed metrics rather than forcing recalculation. Modern semantic layers support result caching for frequent queries, materialized views for complex aggregations, and aggregate awareness allowing optimizers to route queries to pre-aggregated data.
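Result caching is the simplest of these techniques to illustrate. The class below is a minimal in-memory sketch with time-based expiry; the class name, the key format, and the TTL policy are assumptions, and production semantic layers typically also invalidate entries when source data changes.

```python
import time

class ResultCache:
    """Cache metric results for a fixed time-to-live and count hits and misses."""

    def __init__(self, ttl_seconds, clock=time.monotonic):
        self._ttl = ttl_seconds
        self._clock = clock          # injectable so expiry can be tested
        self._entries = {}           # key -> (stored_at, value)
        self.hits = 0
        self.misses = 0

    def get_or_compute(self, key, compute):
        now = self._clock()
        entry = self._entries.get(key)
        if entry is not None and now - entry[0] < self._ttl:
            self.hits += 1
            return entry[1]
        # Missing or expired: run the query and store the fresh result.
        self.misses += 1
        value = compute()
        self._entries[key] = (now, value)
        return value

    def hit_rate(self):
        total = self.hits + self.misses
        return self.hits / total if total else 0.0
```

A key such as `("net_revenue", "2024-01", "EU")` identifies one metric with its filters. Tracking `hit_rate()` over time gives a direct measure of how much recalculation the cache is avoiding.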

Review underlying data models with warehouse teams. Verify table joins are efficient, appropriate indexes exist on join columns, and schema design minimizes computational overhead.

Implement autonomous query optimization using machine learning to analyze query patterns and automatically build aggregates tailored to actual workload characteristics. Rather than manually tuning queries, autonomous optimization systems adapt automatically as patterns change.

For Coverage Gaps:

Prioritize which metrics to formalize based on business impact and usage frequency rather than attempting comprehensive coverage immediately. Start with 10-15 high-value metrics in executive dashboards, regulatory reports, or operational decisions. Once this initial set achieves adoption, incrementally expand based on measured usage patterns.
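One way to produce that initial shortlist is to rank candidates by business criticality first and usage second. The scoring scheme here (a 1-5 criticality rating and monthly query counts) is an assumption; any ordering that reflects impact and frequency would serve.

```python
def prioritize_metrics(candidates, limit=15):
    """Rank (name, criticality, monthly_queries) tuples and return the top names."""
    ranked = sorted(
        candidates,
        # Highest criticality first, then highest usage, then name for stable ties.
        key=lambda c: (-c[1], -c[2], c[0]),
    )
    return [name for name, _, _ in ranked[:limit]]

# Example with made-up ratings and usage counts.
shortlist = prioritize_metrics(
    [("nps", 2, 500), ("revenue", 5, 900), ("churn", 5, 300), ("cac", 4, 1000)],
    limit=3,
)
```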

Implement mechanisms allowing ad-hoc analysis without requiring formal metric definitions. Hybrid approaches combine strict governance for critical metrics with flexible exploratory capabilities for non-standard queries, preventing the semantic layer from constraining analytical innovation.

For Maintenance Overhead:

Ensure the semantic layer integrates with existing data development workflows rather than operating as a parallel system. When it uses the same development language, version control, and deployment pipeline as the rest of the data stack, teams avoid the context switching and specialized skills that drive up maintenance burden.

Implement comprehensive documentation and automated testing. Create documentation explaining what each metric represents, how it’s calculated, underlying assumptions, and usage instructions. Build automated tests verifying metric calculations produce expected results and schema changes don’t break definitions.

For Inflexibility:

Deploy natural language interfaces allowing users to ask questions without understanding technical schemas. Rather than requiring schema modifications for every new question type, the system interprets intent and constructs appropriate queries dynamically.

Implement flexible access patterns supporting both structured BI workflows and exploratory data science work. The semantic layer should serve predefined dashboards efficiently while also supporting dynamic, ad-hoc investigation.

For Governance Overhead:

Implement automated policy enforcement at query execution time rather than through pre-approval workflows. Context-aware systems understand data sensitivity, user permissions, and query intent—then apply appropriate controls transparently without manual intervention.

Establish clear ownership models and change management processes, but ensure these enable rather than obstruct access. Document governance policies explicitly and automate enforcement wherever possible.

Preventive Measures for Long-Term Sustainability

Rather than waiting for bottlenecks to emerge, build preventive practices into semantic layer operations from inception.

Establish strong governance frameworks early, including clear ownership models, change management processes, and security protocols. Assign explicit ownership for different semantic model domains with clear escalation paths for changes and conflicts.

Build monitoring and observability into operations from the beginning. Regular performance monitoring should be automated, including tracking query performance metrics, monitoring cache effectiveness, and establishing clear escalation procedures for performance issues. Set up automated alerts when metrics move into problematic ranges.
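An automated alert check can be as simple as comparing a metrics snapshot with a threshold table. In this sketch the metric names are hypothetical, the latency and maintenance limits come from the figures earlier in this guide, and the cache hit rate floor is an assumed value to tune for your workload.

```python
# metric name -> (direction, limit); "max" alerts above the limit, "min" below it.
DEFAULT_THRESHOLDS = {
    "p95_latency_s": ("max", 3.0),       # critical latency level from this guide
    "maintenance_share": ("max", 0.50),  # liability level from this guide
    "cache_hit_rate": ("min", 0.50),     # assumed value, not from this guide
}

def check_alerts(snapshot, thresholds=DEFAULT_THRESHOLDS):
    """Return a human-readable alert for every metric outside its limit."""
    alerts = []
    for name, (direction, limit) in thresholds.items():
        if name not in snapshot:
            continue  # metric not collected in this snapshot
        value = snapshot[name]
        breached = value > limit if direction == "max" else value < limit
        if breached:
            alerts.append(f"{name}={value} breaches {direction} limit {limit}")
    return alerts
```

Scheduling this check after each metrics collection run, and routing the returned messages to the owning team, covers the escalation step.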

Implement structured onboarding and enablement programs helping new team members and user groups understand the semantic layer’s value and usage. High-quality documentation and responsive support dramatically improve adoption and reduce pressure on data teams.

Plan for semantic layer evolution and versioning. Recognize that business requirements change, data sources evolve, and tools improve. Design with sufficient modularity and flexibility to adapt without requiring complete rearchitecture. Use open standards for semantic definitions when possible to avoid vendor lock-in.

Conclusion

Semantic layers promise to democratize data access while maintaining governance and consistency. Yet poorly implemented systems become the exact bottlenecks they were meant to eliminate—slowing insights, frustrating users, and wasting engineering resources.

The five critical symptoms—query performance degradation, coverage gaps, maintenance overhead, inflexibility, and governance bottlenecks—provide diagnostic criteria for assessing semantic layer health. By systematically measuring these indicators and implementing targeted remediation, organizations can transform failing semantic layers back into valuable assets.

More fundamentally, modern semantic layer architectures address these limitations from the ground up. Federated query pushdown eliminates centralized processing bottlenecks. Zero-copy access removes modeling delays. Agentic memory reduces maintenance burden. Natural language interfaces provide flexibility without sacrificing governance. Context-aware query planning applies rules without slowing execution.

Promethium’s AI Insights Fabric represents a modern architecture that addresses all five bottleneck patterns simultaneously—combining federated query pushdown, zero-copy access, agentic memory, natural language interfaces, and context-aware governance in a single platform. By integrating these capabilities from the ground up rather than layering them onto legacy architectures, it’s designed to prevent semantic layer bottlenecks before they emerge.

The distinction between a functioning semantic layer and a failing one comes down to execution and ongoing management. Organizations that treat semantic layers as strategic infrastructure—establishing clear governance, maintaining comprehensive documentation, continuously monitoring performance—achieve genuine productivity gains. Those that implement without adequate investment often find themselves worse off than before, with fragmented logic, frustrated users, and wasted effort.

By recognizing bottleneck symptoms early and implementing appropriate remediation strategies, data teams can ensure their semantic layers deliver on their promise: democratizing data access while maintaining trust, governance, and performance at enterprise scale.