Top 10 Data Fabric Tools in 2026: Features, Pricing, and Comparison

The data fabric market has transformed from niche offering to essential infrastructure as enterprises grapple with distributed data environments spanning multiple clouds, on-premises systems, and SaaS applications. The global data fabric market reached $3.18 billion in 2025 and is projected to hit $4.55 billion by 2026, growing at 21.81% annually. This explosive growth reflects a fundamental shift: traditional centralized warehouses and point-to-point integrations can no longer handle the complexity of modern enterprise data architectures.

Organizations now face a critical choice among diverse architectural approaches—virtualization-first platforms querying data in place, integration-led solutions moving data through governed pipelines, and unified platforms consolidating multiple services. Each approach offers legitimate advantages for specific contexts, but selecting the wrong platform can lock enterprises into months-long implementations that fail to deliver promised value.

This analysis examines the ten leading data fabric platforms available in 2026, evaluating their core capabilities, pricing structures, and differentiation strategies. We’ll cut through marketing claims to reveal which solutions truly enable self-service analytics, how they handle data governance at scale, and what organizations should expect in terms of deployment timelines and total cost of ownership.

Understanding Data Fabric Evolution in 2026

The data fabric concept has matured significantly over the past eighteen months, driven by converging industry forces. The rise of agentic AI has reshaped data fabric requirements. As enterprises move beyond experimental chatbots to autonomous agents executing business processes independently, the need for high-quality, consistently governed data has become acute. Organizations report LLM accuracy increases up to 300% when integrated with proper governance versus raw tables, making governance infrastructure critical for enterprise AI rather than a compliance checkbox.

Market Leadership and Platform Categories

The 2026 data fabric market comprises distinct solution categories addressing different organizational philosophies. Five enterprise data platforms dominate market share: Snowflake at 35%, Google BigQuery at 28%, Amazon Redshift at 20%, Microsoft Fabric at 12%, and Databricks at 5%. However, this concentration among warehouse-oriented platforms obscures a more nuanced competitive landscape.

Three architectural approaches have emerged. Virtualization-first data fabrics provide fast access without physical data movement, querying multiple systems through a unified layer. Denodo leads this segment, particularly for logical data fabric architectures. Integration-led data fabrics like Talend (now part of Qlik) emphasize traditional ETL enhanced with governance and modern delivery methods. Platform-centric data fabrics—Microsoft Fabric, Databricks—integrate storage, compute, transformation, and analytics into cohesive environments.

Top 10 Data Fabric Platforms

1. Microsoft Fabric

Microsoft Fabric is a unified analytics platform that consolidates data engineering, warehousing, real-time intelligence, and Power BI into a single SaaS experience on Azure. OneLake — its centralized data lake — stores one copy of data accessible across all workloads.

Does well: Deep integration across the Microsoft ecosystem means organizations already running Power BI, Azure, and M365 get a near-seamless experience. Copilot and Data Agents bring natural language querying to every tier. The platform moves fast — Microsoft ships features at a pace few competitors match.

Limitations: Many capabilities are still in preview, and documented multi-hour outages in 2025 raised reliability concerns. The Capacity Unit pricing model is opaque — when CUs are exhausted, all workloads throttle simultaneously. Adopting Fabric means committing fully to Azure; there’s no multi-cloud story here.

Best for: Organizations already deep in the Microsoft stack who want a single platform for analytics and AI — and are willing to tolerate a fast-moving product that’s still maturing.

2. Databricks

Databricks pioneered the lakehouse architecture — combining data lake flexibility with warehouse-grade governance and performance. Built on Apache Spark and Delta Lake, the platform has evolved into what Databricks calls an “Intelligence Platform” spanning analytics, ML, and AI agents.

Does well: Unmatched breadth for technical teams — from batch analytics and real-time streaming to ML model training, AI agents, and now OLTP via the Lakebase database. True multi-cloud support across AWS, Azure, and GCP. Open standards commitment (Delta Lake, Iceberg, MLflow) gives organizations real portability. Unity Catalog provides centralized governance with fine-grained access control and lineage.

Limitations: Complexity is the core challenge. DBU-based pricing is unpredictable — total cost includes Databricks fees plus underlying cloud infrastructure. The platform is overkill for basic reporting or dashboard-centric use cases. BI capabilities, while improving, remain less mature than Power BI or Tableau. Without specialized engineering talent, costs spiral and the platform is underutilized.

Best for: Data-intensive organizations with complex engineering, ML, and AI workloads at scale — particularly those with strong technical teams who need multi-cloud flexibility and open standards.

3. Denodo

Denodo is the market’s leading data virtualization platform, built on the philosophy that data should stay where it is. Rather than moving or copying data, it creates a unified virtual access layer across cloud, on-prem, and SaaS sources — what Denodo calls a “logical data fabric.”

Does well: Fastest time-to-value of any enterprise-grade data fabric — most deployments deliver results within 4–8 weeks. Strong federated query performance through intelligent caching and pushdown optimization. The semantic layer ensures consistent business definitions across all virtualized sources.

Limitations: Performance is only as good as the slowest underlying source, and stacking virtualization layers without caching creates real bottlenecks. Pricing is CPU-core-based and scales steeply — prohibitive for smaller organizations. It’s an access and integration layer, not a full data management platform — no native ETL, data quality, or MDM.

Best for: Mid-to-large enterprises with complex hybrid environments who want real-time federated access without data movement — particularly in financial services and healthcare.

4. Informatica IDMC

Informatica Intelligent Data Management Cloud is the industry’s most comprehensive metadata-driven data management platform, spanning integration, quality, governance, catalog, MDM, and privacy. Now a Salesforce subsidiary following an $8 billion acquisition completed in November 2025, Informatica operates as “Informatica from Salesforce” and serves roughly 5,000 customers across 100+ countries.

Does well: Broadest capability set of any platform on this list — ETL/ELT, data quality, governance, catalog, MDM, iPaaS, and API management under one umbrella. The CLAIRE AI engine provides metadata-driven automation with expanding agentic capabilities. Twenty consecutive years as a Gartner Leader in Data Integration speaks to proven enterprise scale.

Limitations: The breadth comes at a cost — literally. IPU-based consumption pricing is notoriously opaque and difficult to predict. The platform can feel complex to implement and operate, with customer satisfaction lagging behind peers in independent surveys. The Salesforce acquisition introduces new vendor lock-in concerns for non-Salesforce customers, and many newer AI capabilities remain in preview.

Best for: Large enterprises with complex, heterogeneous data landscapes needing comprehensive integration, quality, governance, and MDM under one platform — especially those in the Salesforce ecosystem.

5. Starburst

Starburst is the leading commercial distribution of Trino, the open-source distributed SQL engine originally developed at Meta. The platform treats the entire distributed enterprise as a single SQL database — querying data across 50+ source types simultaneously without movement or duplication.

Does well: Exceptional federated query performance across distributed data sources with true open-source foundations. Strong in data lakehouse environments where teams query Iceberg or Delta Lake tables directly. No vendor lock-in — Trino’s open architecture preserves flexibility. Deep traction in financial services, with nine of the top fifteen US banks as customers.

Limitations: The core value proposition — federated SQL — is narrower than full-stack competitors. Most AI agent features announced in 2025 remain in preview rather than GA. As a business, Starburst has seen valuation compression and headcount reduction since its 2022 peak, raising questions about long-term trajectory. Not a data management platform — no ETL, quality, or governance capabilities.

Best for: Security-conscious enterprises with highly distributed data across hybrid and multi-cloud environments who want open standards and federated query without data migration — particularly financial services.

6. IBM Cloud Pak for Data

IBM’s data fabric story spans two interlocking products: Cloud Pak for Data for data integration, governance, and observability, and watsonx for AI and lakehouse workloads. The platforms can run on a single Red Hat OpenShift cluster but remain distinct products — not yet fully merged.

Does well: Unmatched enterprise breadth for organizations with IBM infrastructure — mainframes, Db2, and legacy systems get first-class integration. Strong governance capabilities across hybrid and multi-cloud environments. The watsonx.data lakehouse runs on Apache Iceberg with Presto and Spark, bringing IBM’s governance strength to modern open formats.

Limitations: Product sprawl creates real confusion — Cloud Pak for Data, watsonx, watsonx.ai, watsonx.data, watsonx.governance, and watsonx BI are all separate offerings with overlapping positioning. User experience feedback consistently cites poor UI design and disorganized documentation. Deployment requires Red Hat OpenShift with substantial hardware minimums, limiting accessibility.

Best for: Large enterprises already invested in the IBM ecosystem — especially regulated industries running mainframes, Db2, and OpenShift that need robust governance and compliance across hybrid cloud.

7. Qlik Talend

Qlik acquired Talend in 2023, combining Qlik’s analytics and data integration with Talend’s data quality, transformation, and governance capabilities. Nearly three years later, the product integration is still in progress — the flagship combined offering, Qlik Talend Cloud, coexists with legacy products from both sides.

Does well: End-to-end data capabilities from CDC and real-time ingestion through ETL/ELT, data quality, governance, and analytics. Strong data quality engine with built-in profiling, cleansing, and validation. The new Qlik Open Lakehouse offers managed Apache Iceberg with competitive performance.

Limitations: Multiple overlapping products from years of acquisitions create genuine buyer confusion about which tool to use. Capacity-based pricing with data volume and execution metrics is difficult to predict. Private equity ownership (Thoma Bravo) raises concerns about pricing trajectories and long-term product strategy. The integration roadmap between Qlik and Talend products remains incomplete.

Best for: Enterprises seeking end-to-end data capabilities from ingestion through analytics — particularly those already using Qlik for BI who want to consolidate their data quality and integration tooling.

8. Google BigQuery

BigQuery is Google Cloud’s serverless data warehouse, designed for zero infrastructure management and massive-scale analytics. It processes queries across Google’s distributed infrastructure without requiring users to provision clusters or manage capacity, and has expanded significantly into AI-native analytics.

Does well: True serverless simplicity — no clusters to manage, no infrastructure to tune. Gemini integration brings AI agents for data engineering, data science, and conversational analytics directly into the SQL workflow. BigQuery Omni provides genuine multi-cloud querying across AWS and Azure data without movement. BigLake unifies warehouses and lakes across open formats.

Limitations: Cost unpredictability is the primary concern — on-demand pricing can produce bill shock on large queries, and Google added a default daily data limit as a safety measure. Multi-cloud feature parity is incomplete: several capabilities including BigQuery ML and JavaScript UDFs don’t work in Omni regions. Being fully serverless means less ability to fine-tune performance for specific workloads.

Best for: Organizations on GCP or pursuing multi-cloud analytics who want zero infrastructure management — especially AI/ML-heavy teams leveraging Gemini integration for large-scale ad-hoc analysis.

9. Amazon Redshift

Amazon Redshift pioneered cloud data warehousing and remains the analytics backbone for AWS-centric organizations. The platform offers both provisioned clusters and a serverless tier, with columnar storage optimized for analytical queries and deep integration across the AWS ecosystem.

Does well: Seamless integration with S3, Lambda, SageMaker, Bedrock, and other AWS services creates a cohesive analytics environment. Zero-ETL integrations with Aurora, DynamoDB, and Kinesis enable near real-time analytics without pipeline construction. Apache Iceberg write support (GA late 2025) brings full open lakehouse participation. Amazon Q and Bedrock integration add natural language querying and LLM invocation via SQL.

Limitations: AWS lock-in is absolute — no multi-cloud capability exists. Provisioned clusters still require understanding distribution keys, sort keys, and workload management queues. Default concurrency limits are lower than competitors. The interface and developer tooling feel dated compared to Snowflake or BigQuery.

Best for: Organizations deeply invested in the AWS ecosystem wanting tight service integration — particularly those leveraging zero-ETL from Aurora or DynamoDB for near real-time analytics.

10. Promethium

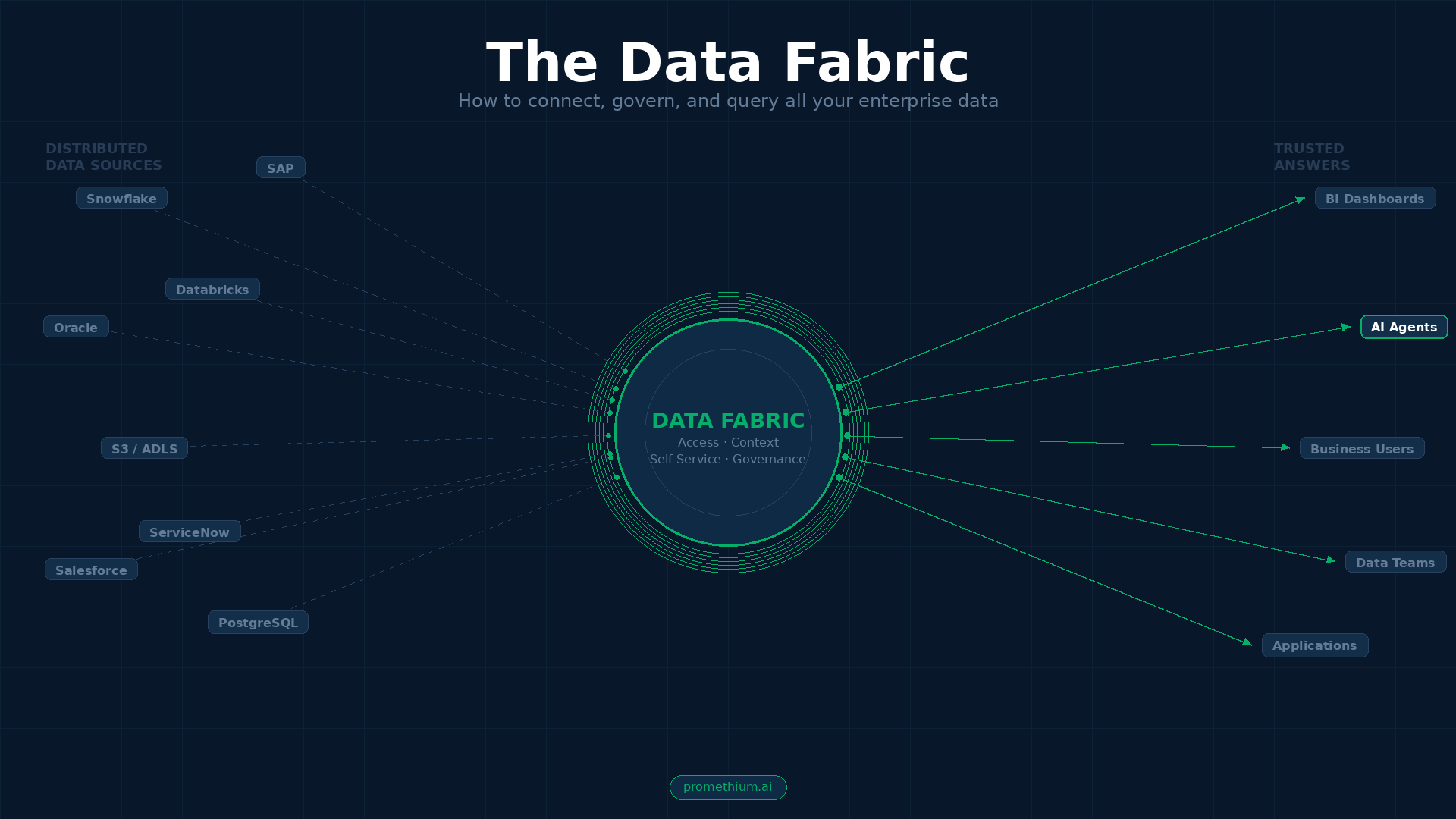

Promethium takes a fundamentally different approach from both platform-centric and virtualization-first solutions. As an AI Insights Fabric, it’s purpose-built for the agent era — enabling organizations to talk to all their data instantly through natural language, connecting users, agents, and tools to accurate, explainable answers across distributed enterprise data.

The architecture delivers three tightly integrated layers: a Universal Query Engine for zero-copy federated access across cloud, SaaS, and on-prem systems; a 360° Context Hub that unifies technical metadata, semantic definitions, and business rules for trusted accuracy; and the Mantra™ Data Answer Agent for conversational self-service with complete explainability and lineage. Native MCP and A2A support means any AI agent can query enterprise data with full context and governance — a capability no other platform on this list delivers natively.

Does well: Deploys in weeks, not months — no data migration or re-architecture required. The unified context layer combines metadata from catalogs, BI tools, and semantic layers in a way competitors simply don’t. Supports queries and questions across distributed data. Every answer is explainable with complete lineage, which matters enormously for regulated industries and AI governance. Named a Gartner Cool Vendor in 2024 for its innovative approach.

Limitations: As a Series A startup, Promethium doesn’t offer the breadth of a full data management platform — no native ETL, CDC, or MDM. Enterprise-scale track record is still growing compared to established vendors.

Best for: Organizations implementing AI agents that need consistent business logic and instant access to distributed data — without copying, moving, or centralizing it first. Particularly strong for enterprises that want to make their existing data stack AI-ready without ripping and replacing.

Platform Comparison Summary

| Platform | Zero-Copy Federation | Unified Context Layer | Conversational Self-Service | Agent Integration (MCP/A2A) | Explainability & Lineage | Open Architecture | Time to Value |

|---|---|---|---|---|---|---|---|

| Microsoft Fabric | ❌ | Limited | ✅ (Copilot) | ❌ | ✅ (Purview) | ❌ (Microsoft ecosystem) | 3-6 months |

| Databricks | ❌ | Limited | Partial | ❌ | ✅ (Unity Catalog) | ✅ (Open-source) | 3-6 months |

| Denodo | ✅ | ✅ (Semantic) | ❌ | ❌ | ✅ | ✅ | 4-8 weeks |

| Informatica IDMC | ❌ | ✅ (Knowledge Graph) | Limited | ❌ | ✅ (CLAIRE AI) | Partial | 6-12 months |

| Starburst | ✅ | Limited | ❌ | ❌ | ✅ | ✅ (Trino-based) | 2-4 months |

| IBM Cloud Pak | Partial | Limited | Limited (Watson) | ❌ | ✅ | Partial | 6-12 months |

| Talend | ❌ | Limited | ❌ | ❌ | ✅ | Partial | 6-18 months |

| Google BigQuery | ❌ | ❌ | Limited | ❌ | Partial | ❌ (Google ecosystem) | 1-3 months |

| Amazon Redshift | ❌ | ❌ | Limited | ❌ | Partial | ❌ (AWS ecosystem) | 2-4 months |

| Promethium | ✅ (Trino + custom connectors) | ✅ (360° Context Hub) | ✅ (Mantra™) | ✅ (Native MCP/A2A) | ✅ (Complete) | ✅ (all data, all context, all users) | 2-4 weeks |

How to choose: what actually matters

Selecting the right data fabric depends less on which platform is “best” and more on three practical questions.

Where does your data live? If it’s concentrated in one cloud ecosystem, the native platform (Fabric, BigQuery, Redshift) will deliver the smoothest experience. If it’s distributed across multiple clouds, on-prem systems, and SaaS applications, virtualization-first and federation-first approaches (Denodo, Starburst, Promethium) avoid the pain of centralization.

What’s your primary use case? Comprehensive data management and governance points toward Informatica or Qlik Talend. AI/ML workloads at scale favor Databricks. Self-service analytics for business users favor platforms with strong natural language interfaces. AI agent enablement — giving autonomous agents governed access to enterprise data — is where Promethium’s architecture uniquely shines.

How fast do you need results? If the board wants visible progress this quarter, virtualization-first platforms deploying in weeks outperform integration-led approaches requiring months of pipeline construction. If you’re building for the long term with dedicated engineering resources, platform-centric solutions deliver stronger performance economics over time.

The most successful organizations recognize that data fabric implementation is an organizational journey — not just a technology purchase. Platform choice matters, but execution excellence — clear governance frameworks, user training, and iterative improvement — ultimately determines whether any of these tools deliver on their promise.