82% of enterprises struggle with data silos, and 68% of enterprise data remains unanalyzed—not because the data doesn’t exist, but because the right tools don’t connect business users to it effectively. Organizations invest millions in data warehouses, lakes, and pipelines, then watch adoption fail because business users can’t navigate technical schemas or wait weeks for IT to fulfill basic requests.

Data democratization tools promise to bridge this gap—making data accessible, understandable, and actionable for everyone. But the platform landscape is complex: self-service BI tools, semantic layers, data catalogs, integration platforms, and comprehensive end-to-end solutions all claim to enable democratization. Which tools do you actually need? How do platforms like Promethium, AtScale, Tableau, and Domo differ? What works for distributed data versus centralized warehouses?

Here’s a comprehensive evaluation framework covering tool categories, detailed vendor comparisons, selection criteria, and implementation best practices that determine whether democratization succeeds or fails.

Understanding the Tool Categories

Complete data democratization requires multiple complementary tool types working together—not a single platform solving everything.

Self-Service Analytics and BI Platforms

Self-service analytics tools empower business users to explore data, create visualizations, and derive insights without requiring SQL expertise or constant IT support. These platforms provide the front-end interfaces through which business users interact with democratized data.

Core capabilities include:

- Visual query builders that let users select dimensions, measures, and filters through point-and-click interactions

- Interactive dashboards with drill-down, filtering, and real-time data exploration

- Automated insights using AI to surface anomalies, trends, and significant patterns

- Collaboration features enabling teams to share analyses and build on each other’s work

- Mobile access for decision-making anywhere

- Embedded analytics for integrating insights into operational applications

Tableau: Visual Analytics Leader

Tableau has long been the gold standard for rich, interactive visualizations and flexible exploratory analysis. It’s the choice for organizations prioritizing visual storytelling and ad-hoc investigation over standardized reporting.

Key Capabilities:

Advanced Visualization: Extensive chart types, custom visualizations, and sophisticated formatting options. Drag-and-drop interface makes complex visualizations accessible to non-technical users.

Exploratory Analysis: Strong support for ad-hoc exploration, hypothesis testing, and iterative investigation. Users can follow analytical threads naturally without rigid dashboard constraints.

Data Connectivity: Native connectors for 70+ data sources including cloud warehouses, databases, files, and SaaS applications. Live connections or extracts depending on performance needs.

Collaboration: Tableau Server and Tableau Cloud enable sharing dashboards, subscribing to updates, and commenting on visualizations.

Strengths: Best-in-class visualization capabilities, strong user community, flexible exploration, powerful calculated fields.

Considerations: Higher learning curve than simpler tools, premium pricing, requires some training for advanced features.

Ideal For: Organizations prioritizing visual storytelling, data analysts comfortable with sophisticated tools, companies needing flexible ad-hoc analysis.

Microsoft Power BI: Enterprise Integration Platform

Microsoft Power BI delivers enterprise-grade integration with the Microsoft ecosystem at accessible pricing. Organizations already invested in Microsoft 365, Azure, and Dynamics benefit from native integration and familiar interfaces.

Key Capabilities:

Microsoft Ecosystem Integration: Seamless integration with Excel, Teams, SharePoint, Azure, and Dynamics 365. Users work within familiar Microsoft interfaces reducing learning curves.

Power Query: Robust data transformation engine enabling complex ETL operations within the BI tool itself. M language provides advanced users powerful manipulation capabilities.

AI Features: Quick insights, key influencers analysis, decomposition trees, and Q&A natural language queries. AI-powered anomaly detection highlights significant patterns.

Scalability: Power BI Premium provides dedicated capacity for enterprise deployments with large user bases and high query volumes.

Strengths: Excellent value proposition, familiar Microsoft UX, strong Azure integration, active development roadmap.

Considerations: Less flexible than Tableau for custom visualizations, governance features require Premium licensing, best for Microsoft-centric environments.

Ideal For: Organizations heavily invested in Microsoft ecosystem, companies prioritizing cost-effectiveness, enterprises needing tight Azure integration.

Looker: Governance-First Analytics

Looker (now part of Google Cloud) provides strong governance through its LookML semantic modeling layer. Data teams define metrics centrally, ensuring business users work from consistent definitions while maintaining self-service flexibility.

Key Capabilities:

LookML Semantic Layer: Version-controlled business logic defining metrics, relationships, and calculations centrally. Changes propagate automatically to all users and dashboards.

Git-Based Workflow: LookML lives in version control enabling code review, testing, and deployment workflows familiar to engineering teams. Changes are auditable and reversible.

Embedded Analytics: Strong API and embedding capabilities for integrating analytics into applications. White-label dashboards for external stakeholders.

Data Modeling: Sophisticated modeling capabilities for complex business logic, hierarchies, and derived metrics.

Strengths: Best-in-class governance, version-controlled definitions, strong for embedded analytics, scalable semantic layer.

Considerations: Requires technical resources for LookML development, steeper learning curve for data modeling, tightly coupled to Google Cloud ecosystem.

Ideal For: Organizations prioritizing governance and consistent definitions, companies with engineering-led data teams, businesses needing embedded analytics.

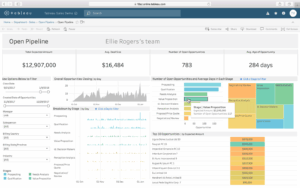

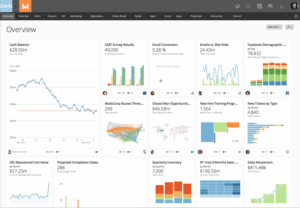

Domo: End-to-End Business Cloud

Domo combines data integration, storage, transformation, visualization, and collaboration in a single cloud platform. It’s particularly strong for organizations needing integrated ETL, storage, and analytics without assembling multiple tools.

Key Capabilities:

Integrated Data Management: Built-in ETL with 1000+ pre-built connectors, cloud data warehouse, and transformation capabilities. Single platform for end-to-end data workflows.

Mobile-First Design: Native iOS and Android apps with push notifications and mobile-optimized dashboards. Executives access insights anywhere without desktop requirements.

Business App Studio: Low-code environment for building custom applications on top of data. Create workflow apps, forms, and interactive experiences beyond traditional dashboards.

Collaboration Features: Social collaboration, alerts, scheduled reports, and commenting directly on visualizations.

Strengths: Comprehensive end-to-end platform, strong mobile experience, extensive connector library, rapid deployment.

Considerations: Higher total cost than specialized tools, less flexible visualizations than Tableau, proprietary platform creates some lock-in.

Ideal For: Organizations wanting all-in-one platforms, companies prioritizing mobile access, businesses needing rapid deployment without infrastructure.

BI Platform Comparison:

| Feature | Tableau | Power BI | Looker | Domo |
|---|---|---|---|---|
| Visualization flexibility | Excellent | Good | Good | Good |
| Ease of use | Moderate | Easy | Moderate | Easy |
| Governance | Good | Good | Excellent | Good |
| Integration | 70+ connectors | Microsoft-centric | Google Cloud-centric | 1000+ connectors |
| Pricing | Premium | Budget-friendly | Premium | Premium |
| Mobile experience | Good | Good | Good | Excellent |
| Best for | Visual storytelling | Microsoft shops | Governed metrics | End-to-end platform |

The core value proposition across all platforms: reducing time-to-insight by eliminating bottlenecks between business questions and data-driven answers. Organizations report that effective self-service BI enables users to generate insights independently within hours rather than waiting days or weeks for centralized data teams.

Semantic Layers and Data Virtualization Platforms

Semantic layers represent one of the most transformative yet underappreciated components of democratization architectures. These platforms sit between raw data systems and end users, translating complex technical schemas into business-friendly terms and unified metrics that non-technical stakeholders can understand and trust.

Think about what business users face without semantic layers: tables named fct_ord_ln_itm_dtl, columns like amt_1 and stat_cd, dozens of similar-sounding tables with unclear relationships, complex join logic requiring database expertise. The semantic layer abstracts this into business concepts: “Revenue,” “Active Customers,” “Product Categories,” “Order Status.”
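To make the translation concrete, here is a minimal sketch of what a semantic layer does at its core: it maps business terms to physical expressions and compiles a business question into SQL. The physical names are taken from the example above, but the meanings assigned to them (that `amt_1` holds revenue amounts and `stat_cd` holds order status) are assumptions for illustration, and `Metric`, `SEMANTIC_MODEL`, and `compile_query` are hypothetical names rather than any vendor's API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Metric:
    """A business-facing metric mapped to a physical SQL expression."""
    name: str
    sql: str    # aggregate expression over physical columns
    table: str  # physical table that holds those columns


# Hypothetical mappings: the business meaning of each physical name is assumed.
SEMANTIC_MODEL = {
    "Revenue": Metric("Revenue", "SUM(amt_1)", "fct_ord_ln_itm_dtl"),
    "Order Count": Metric("Order Count", "COUNT(*)", "fct_ord_ln_itm_dtl"),
}
DIMENSIONS = {"Order Status": "stat_cd"}


def compile_query(metric_name: str, dimension_name: str) -> str:
    """Translate a business question into SQL against the physical schema."""
    metric = SEMANTIC_MODEL[metric_name]
    column = DIMENSIONS[dimension_name]
    return (
        f'SELECT {column} AS "{dimension_name}", '
        f'{metric.sql} AS "{metric_name}" '
        f"FROM {metric.table} GROUP BY {column}"
    )


print(compile_query("Revenue", "Order Status"))
```

Because every tool asks for "Revenue" by name and receives the same compiled expression, the definition lives in one place instead of being re-derived in each dashboard.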

Promethium: AI Insights Fabric for Instant, Governed Data Access

Promethium delivers the industry’s first agentic platform purpose-built to empower employees to simply talk to all their data. Unlike traditional semantic layer platforms that require extensive modeling upfront or data virtualization tools that add query latency, Promethium’s AI Insights Fabric provides universal, zero-copy access to enterprise data through natural language queries.

Core Architecture:

Universal Query Engine connects to cloud, on-premise, and SaaS sources (including Snowflake, Databricks, Microsoft Fabric, AWS, Azure, Oracle, SQL Server, PostgreSQL, and Salesforce) without requiring data movement or duplication. Queries execute in under a second against live data using federated query capabilities powered by Trino with intelligent pushdown optimization.

360° Context Hub automatically aggregates technical and business metadata into a unified knowledge graph that bridges business questions to underlying data. This context engine provides:

- Complete data lineage across all connected sources

- Unified business definitions and semantic models from BI tools and data catalogs

- Golden queries and certified data patterns

- Organizational rules and tribal knowledge captured through human reinforcement

- Role-based access control enforced at query level

Mantra™ Data Answer Agent enables natural language interactions with enterprise data, autonomously creating, refining, and publishing reusable “Data Answers” that teams can share across workflows. The agent maintains memory across interactions, learning organizational context and user preferences over time. Multi-agent orchestration handles complex queries requiring planning, SQL generation, reasoning, and evaluation.

Key Differentiators:

Zero-Copy Architecture: Unlike Microsoft Fabric (requires OneLake migration) or traditional warehouses (require ETL), Promethium queries data where it lives. This eliminates data movement costs, reduces staleness, and simplifies governance by maintaining single authoritative sources.

AI-Native Design: Purpose-built for the agent era with native MCP (Model Context Protocol) and A2A (Agent-to-Agent) integration. Both human users and AI agents access data through the same governed, contextual interface.

Open by Design: Works with any data platform, BI tool, catalog, or agent. No vendor lock-in. Preserves and enhances existing technology investments rather than replacing them.

Instant Deployment: Production-ready in weeks, not months. Delivers immediate value through federated access before migrating or re-platforming becomes necessary.

Enterprise-Grade Security: SOC 2 Type II certified with encryption in transit and at rest, comprehensive audit trails, and customer-managed encryption keys. Data sovereignty maintained—customer data never leaves their environment.

Enterprise Impact:

Organizations implementing Promethium report:

- 10× faster response times for ad hoc business questions (days to minutes)

- 5× productivity increases for data teams, analysts, and business users

- Production deployment in 4 weeks or less

Customers including National Grid and Quest Diagnostics leverage Promethium to democratize data access while maintaining governance and compliance.

Ideal For:

Organizations with distributed data across multiple platforms, enterprises seeking to enable AI at scale, companies needing fast time-to-value without data migration, and teams requiring both human and AI agent access to governed data.

AtScale: Universal Semantic Layer Platform

AtScale provides a universal semantic layer platform that connects business users and AI agents to live cloud data without moving or duplicating information. AtScale’s semantic layer ensures consistent metric definitions across the organization.

Key Capabilities:

Unified Business Definitions: When someone references “revenue” or “customer lifetime value,” everyone calculates it identically rather than creating incompatible variations based on department-specific interpretations.

Live Query Connections: AtScale supports direct connections from Tableau, Power BI, Excel, and Python through standard protocols while maintaining consistent semantic definitions. Queries execute against cloud data warehouses with aggressive caching and pre-aggregation for performance.

AI Accuracy Improvements: Research demonstrates that semantic layers make GenAI 3× more accurate than direct SQL queries by providing rich business context and standardized definitions that large language models can leverage.

Ideal For:

Organizations with centralized cloud warehouses (Snowflake, Databricks, BigQuery) seeking to provide multiple BI tools with consistent semantic access, enterprises prioritizing metric standardization across departments, and companies deploying AI-powered analytics requiring business context.

Denodo: Mature Data Virtualization Platform

Denodo offers comprehensive data virtualization creating a virtual layer that abstracts underlying data sources regardless of structure, format, or location.

Key Capabilities:

Universal Integration: Connects to virtually any data source—relational databases, NoSQL stores, cloud platforms, SaaS applications, APIs, files—presenting them through unified virtual views.

Enterprise-Grade Performance: Sophisticated caching, query optimization, and parallel processing ensure acceptable performance for federated queries across distributed sources.

Mature Governance: Comprehensive security, access control, and audit capabilities meeting enterprise requirements for regulated industries.

Ideal For:

Large enterprises with complex, heterogeneous data landscapes requiring proven, mature virtualization capabilities, organizations in regulated industries needing comprehensive audit and compliance features, and companies with significant investment in traditional data infrastructure.

Platform Comparison:

| Capability | Promethium | AtScale | Denodo |
|---|---|---|---|
| Zero-copy access | ✓ Federated across all sources | ✓ Virtual layer, some caching | ✓ Virtual layer |
| Natural language queries | ✓ Mantra agent with memory | Limited | Limited |
| Data movement required | ✗ None | ✗ None (caches metadata) | ✗ None |
| AI-native architecture | ✓ MCP/A2A protocols | Developing | Limited |
| Deployment speed | 4 weeks typical | 8-12 weeks | 12-16 weeks |
| Primary use case | AI analyst | Cloud warehouse semantic layer | Enterprise virtualization |

Data Catalog and Metadata Management Tools

Data catalogs function as searchable inventories of organizational data assets, enabling users to discover what data exists, understand its meaning and quality, and evaluate whether it’s suitable for their analytical needs.

Without catalogs, democratization fails at discovery: users don’t know what data exists, can’t find relevant datasets, misunderstand what they’ve found, or duplicate analyses because they’re unaware of existing work.

Core catalog capabilities:

- AI-powered search enabling natural language queries to find relevant datasets

- Automated metadata harvesting that catalogs technical specifications and business definitions

- Data lineage tracking showing origins, transformations, and dependencies

- Quality scoring and freshness indicators helping users assess trustworthiness

- Collaborative annotations allowing SMEs to enrich metadata with contextual knowledge

- Usage analytics showing which datasets are most valuable and how they’re utilized
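Lineage tracking is, at bottom, a graph problem: datasets are nodes and "feeds into" relationships are edges. The sketch below shows how a catalog could answer "what breaks if this source changes?" with a breadth-first traversal. The dataset names and the `LINEAGE` structure are hypothetical; real catalogs harvest these edges from query logs and pipeline metadata.

```python
from collections import deque

# Hypothetical lineage edges: each key feeds the datasets in its list.
LINEAGE = {
    "crm.accounts": ["staging.customers"],
    "erp.orders": ["staging.orders"],
    "staging.customers": ["marts.customer_360"],
    "staging.orders": ["marts.customer_360", "marts.revenue_daily"],
    "marts.customer_360": ["dash.churn"],
    "marts.revenue_daily": [],
    "dash.churn": [],
}


def downstream(dataset: str, lineage: dict[str, list[str]]) -> list[str]:
    """Return every dataset reachable from `dataset`, in breadth-first order."""
    seen: set[str] = set()
    order: list[str] = []
    queue = deque(lineage.get(dataset, []))
    while queue:
        node = queue.popleft()
        if node in seen:
            continue  # a dataset can be reachable through several paths
        seen.add(node)
        order.append(node)
        queue.extend(lineage.get(node, []))
    return order


print(downstream("erp.orders", LINEAGE))
```

Reversing the edges and running the same traversal gives the upstream view, which tells a user where a dataset's values originate.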

Alation: AI-Powered Collaborative Catalog

Alation pioneered the modern data catalog category and provides AI-powered search with strong collaborative features. The platform combines automated metadata harvesting with crowdsourced knowledge capture.

Key Capabilities:

Behavioral AI: Machine learning analyzes query patterns, user behavior, and popularity to recommend relevant datasets. The system learns what data experts use for specific questions.

Automated Metadata Harvesting: Connects to 70+ data sources to automatically catalog technical metadata including schemas, relationships, query patterns, and usage statistics.

Collaborative Features: Users can endorse datasets, add warnings, write documentation, and ask questions directly on catalog pages. Subject matter experts become visible stewards.

Data Health Scoring: Automated quality indicators show freshness, completeness, and popularity. Users quickly assess whether data is trustworthy and current.

Impact Analysis: Lineage tracking shows upstream sources and downstream dependencies. Understand impact before making schema changes.

Strengths: Strong collaborative features, behavioral AI recommendations, active user community, comprehensive lineage.

Considerations: Requires cultural adoption for collaborative features to add value, premium pricing, works best with engaged data stewards.

Ideal For: Organizations with strong data culture, companies prioritizing collaboration and knowledge sharing, enterprises needing comprehensive lineage.

Customer Impact: A major retail company reduced data discovery time by 50% through Alation’s automated metadata management and clear lineage tracking, enabling faster decision-making and improved product recommendations.

Collibra: Governance-First Data Intelligence

Collibra excels in governance-first approaches with granular controls essential for highly regulated industries like financial services and healthcare. The platform provides comprehensive policy management and workflow automation.

Key Capabilities:

Data Governance Framework: Built-in governance workflows for data stewardship, policy management, term approval, and compliance tracking. Formalized processes ensure accountability.

Business Glossary: Centralized repository of business terms, definitions, and relationships. Ensures organization-wide agreement on what metrics mean.

Policy Management: Define and enforce data handling policies including retention, privacy, access, and usage rules. Automated policy enforcement with exception workflows.

Compliance Automation: Pre-built frameworks for GDPR, CCPA, HIPAA, and other regulations. Track consent, lineage, and data subject rights systematically.

Data Quality Rules: Define quality dimensions, thresholds, and validation rules. Monitor quality metrics and trigger remediation workflows when issues arise.

Strengths: Comprehensive governance capabilities, strong for regulated industries, workflow automation, policy enforcement.

Considerations: Complex implementation requiring governance maturity, significant upfront configuration, premium pricing, best for large enterprises.

Ideal For: Highly regulated industries (financial services, healthcare), enterprises with formal governance requirements, companies prioritizing compliance over ease of use.

Informatica Enterprise Data Catalog

Informatica Enterprise Data Catalog offers deep integration with Informatica’s broader data management suite including MDM, data quality, and integration platforms. It is a strong fit for organizations already invested in the Informatica ecosystem.

Key Capabilities:

AI-Powered Discovery: CLAIRE AI engine automatically discovers, classifies, and relates data assets. Identifies sensitive data (PII, PHI) for compliance purposes.

Cross-Platform Lineage: Tracks lineage across Informatica and external tools including databases, ETL platforms, BI tools, and cloud services.

Relationship Discovery: AI infers relationships between datasets based on structural similarity, join patterns, and usage analysis.

Informatica Integration: Native integration with Informatica PowerCenter, Cloud Data Integration, and Master Data Management for unified data governance.

Strengths: Deep Informatica ecosystem integration, strong AI discovery, comprehensive lineage, enterprise-grade scalability.

Considerations: Works best within Informatica ecosystem, complex pricing, requires Informatica expertise, less community-driven than Alation.

Ideal For: Organizations heavily invested in Informatica tools, enterprises needing unified governance across Informatica platform, companies with complex integration landscapes.

Open-Source Catalog Alternatives

Apache Atlas, Amundsen (developed by Lyft), and DataHub (developed by LinkedIn) provide cost-effective options for organizations with technical resources to deploy and maintain catalog infrastructure.

Apache Atlas: Hadoop-native catalog originally built for the Hadoop ecosystem. Strong integration with Hive, HBase, and related big data tools. Good for organizations with significant Hadoop investments.

Amundsen: User-friendly interface emphasizing data discovery and collaboration. Lyft open-sourced it after using it internally to democratize data. Lightweight and modern architecture.

DataHub: LinkedIn-built platform emphasizing metadata relationships and real-time updates. Strong API-first design for custom integrations. Active development community.

Strengths: No licensing costs, full customization control, transparency into code, active open-source communities.

Considerations: Requires infrastructure and engineering resources to deploy/maintain, less polished user experience than commercial tools, community support versus vendor SLAs.

Ideal For: Organizations with strong engineering teams, companies prioritizing customization and cost savings, teams comfortable maintaining open-source infrastructure.

Data Catalog Comparison:

| Feature | Alation | Collibra | Informatica | Open-Source |
|---|---|---|---|---|
| Discovery | AI-powered | Policy-driven | AI-powered | Manual/basic |
| Governance | Collaborative | Comprehensive | Integrated | Limited |
| Ease of use | High | Moderate | Moderate | Low |
| Pricing | Premium | Premium | Premium | Free (+ infrastructure) |
| Best for | Collaboration | Compliance | Informatica shops | Custom needs |

Selection Considerations:

Evaluate catalogs based on integration depth with your data platforms, ease of use for non-technical users, automated versus manual metadata enrichment, lineage tracking capabilities across tools, governance framework alignment, and total cost including implementation and maintenance.

Data Integration and Pipeline Platforms

Data integration tools streamline extraction, transformation, and loading of data from diverse sources into centralized repositories where democratization platforms can access it.

Airbyte: Open-Source Integration Leader

Airbyte offers 600+ pre-built connectors spanning databases, SaaS applications, APIs, and file systems. Its open-source foundation provides flexibility and eliminates vendor lock-in, while the AI-powered Connector Builder enables custom integrations in minutes.

Strengths:

- Massive connector library with active community contributions

- Open-source transparency and no vendor lock-in

- Support for both batch and real-time CDC pipelines

- Sub-5-minute synchronization for real-time needs

Ideal For: Organizations prioritizing flexibility and customization, companies with technical resources to deploy open-source infrastructure, and teams needing custom connectors for unique data sources.

Fivetran: Fully Managed Integration

Fivetran delivers fully managed, zero-maintenance data replication with 400+ connectors and strong automation for error handling and schema evolution.

Strengths:

- Turnkey solution requiring minimal configuration

- Automatic schema drift handling

- High reliability with proactive monitoring

- Excellent for organizations prioritizing simplicity over customization

Ideal For: Organizations preferring managed services over self-hosted infrastructure, teams lacking dedicated data engineering resources, and companies valuing reliability over cost optimization.

Rivery: All-in-One Cloud Platform

Rivery (recently acquired by Boomi) provides an all-in-one cloud-native ELT platform combining data integration, transformation, and orchestration with 200+ source connectors.

Strengths:

- Visual no-code interface works alongside SQL and Python

- Reusable “Logic Rivers” and prebuilt data model kits

- Integrated orchestration and workflow automation

- Strong for hybrid technical/business user teams

Ideal For: Organizations needing integrated ETL/ELT and orchestration, teams with mixed technical skill levels, and companies seeking visual workflow builders.

Integration Platform Comparison:

| Feature | Airbyte | Fivetran | Rivery |
|---|---|---|---|
| Connector count | 600+ | 400+ | 200+ |
| Deployment | Self-hosted or cloud | Fully managed | Fully managed |
| Pricing model | Free (open-source) + cloud | Per-connector + usage | Tiered by volume |
| Customization | High (open-source) | Low | Medium |
| Best for | Custom needs, flexibility | Simplicity, reliability | Integrated workflows |

These integration platforms are critical for democratization because they ensure data flows reliably from operational systems into centralized repositories where semantic layers, catalogs, and BI tools provide governed, self-service access.

Tool Selection Criteria: Evaluating Platforms Systematically

Selecting appropriate tools requires evaluating multiple dimensions aligned to organizational needs, existing infrastructure, and user capabilities.

Ease of Use and User Experience

Adoption is the primary success metric—if tools are too difficult for target users, democratization initiatives fail regardless of technical sophistication.

Platforms should provide:

- Intuitive interfaces suitable for non-technical users without excessive training

- Natural language query capabilities enabling conversational interactions

- Pre-built templates accelerating time-to-first-insight

- Progressive disclosure where advanced features don’t overwhelm novice users

Evaluation approach:

Conduct hands-on trials with actual end users—not just technical evaluators. Business users quickly reveal usability issues that technical teams miss. Test common workflows: finding relevant data, creating simple visualizations, applying filters, sharing results.

Domo and Power BI rate highly for usability with drag-and-drop visualizations and familiar interfaces. Promethium’s natural language Mantra agent provides conversational simplicity for users uncomfortable with traditional BI tools.

Integration Capabilities and Ecosystem Compatibility

Modern organizations operate with diverse technology stacks spanning cloud platforms, on-premise systems, and SaaS applications. Democratization platforms must integrate seamlessly with:

- Existing data warehouses: Snowflake, Databricks, Redshift, BigQuery, Microsoft Fabric

- BI and analytics tools: Tableau, Power BI, Looker, Excel, Python, Jupyter

- Data catalogs: Alation, Collibra, Unity Catalog, Purview

- Governance and security systems: SSO, RBAC, audit logging, encryption

Evaluation approach:

Don’t accept “yes, we integrate” at face value. Test integration depth: Does the platform support federated queries or require data copies? Can it handle both structured and unstructured data? Does it integrate with emerging AI frameworks and agent architectures?

AtScale excels in multi-tool compatibility supporting live query connections through standard protocols. Promethium provides open architecture with REST, SQL, JDBC, and LLM integration APIs ensuring compatibility with existing modern data stacks. Proprietary platforms like Microsoft Fabric work exceptionally well within the Microsoft ecosystem but require careful evaluation for cross-platform environments.

Scalability and Performance

Democratization must scale efficiently as data volumes grow, user counts increase, and query complexity expands.

Key scalability considerations:

- Cloud-native architecture enabling elastic scaling without infrastructure constraints

- Query optimization including caching, pre-aggregation, and intelligent pushdown to underlying sources

- Multi-cloud support for organizations with distributed data across AWS, Azure, and Google Cloud

- Performance under concurrent load maintaining sub-second response times with hundreds of simultaneous users

Evaluation approach:

Test with realistic data volumes and concurrent user counts. Don’t accept vendor-provided benchmarks—run your own tests with your data and query patterns. Evaluate cost scaling: does performance require linearly increasing infrastructure spend?

Snowflake provides exceptional scalability through cloud-native separation of storage and compute. Promethium delivers scalable federated query execution with intelligent pushdown optimization. Denodo offers mature performance tuning for complex virtualization scenarios.

Governance and Security Capabilities

Democratization without governance creates compliance risks, data quality issues, and security vulnerabilities.

Essential governance features:

- Role-based and attribute-based access control ensuring users access only authorized data

- Row and column-level security restricting sensitive information within datasets

- Complete data lineage tracking dependencies and transformation logic

- Comprehensive audit logging documenting who accessed what data when

- Automated data quality tracking with profiling and anomaly detection
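As a rough illustration of how role-based access combines with row- and column-level security, the sketch below filters a result set by role before it reaches the user. The roles, column names, and the `Policy` and `apply_policy` names are all hypothetical; production platforms typically enforce these rules inside the query engine rather than in application code.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Policy:
    """Access rules for one role (all names here are hypothetical)."""
    allowed_columns: frozenset[str]  # column-level security
    allowed_regions: frozenset[str]  # row-level filter on the "region" column


POLICIES = {
    "sales_rep": Policy(
        frozenset({"order_id", "region", "amount"}),
        frozenset({"EMEA"}),
    ),
    "finance": Policy(
        frozenset({"order_id", "region", "amount", "customer_email"}),
        frozenset({"EMEA", "AMER"}),
    ),
}

ROWS = [
    {"order_id": 1, "region": "EMEA", "amount": 120.0, "customer_email": "a@example.com"},
    {"order_id": 2, "region": "AMER", "amount": 80.0, "customer_email": "b@example.com"},
]


def apply_policy(rows: list[dict], role: str) -> list[dict]:
    """Drop rows and columns the role may not see; unknown roles raise KeyError."""
    policy = POLICIES[role]
    return [
        {col: val for col, val in row.items() if col in policy.allowed_columns}
        for row in rows
        if row["region"] in policy.allowed_regions
    ]


print(apply_policy(ROWS, "sales_rep"))
```

Raising on an unknown role is a deliberate deny-by-default choice: a user with no policy sees nothing rather than everything.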

Evaluation approach:

Test granularity of access controls: can you restrict by role, department, project, and data sensitivity simultaneously? Evaluate audit capabilities: can you reconstruct complete access history for compliance reporting? Verify integration with existing security infrastructure: SSO, MFA, encryption.

Collibra leads in governance-centric approaches for regulated industries. Promethium enforces query-level, role-aware access control with complete audit trails while maintaining SOC 2 Type II certification. Denodo provides comprehensive enterprise security meeting stringent compliance requirements.

Cost and Total Cost of Ownership

Pricing models vary dramatically across platforms, from per-user subscriptions to consumption-based billing to capacity pricing.

Total cost of ownership includes:

- Licensing costs: Upfront fees, per-user subscriptions, or consumption-based pricing.

- Infrastructure costs: Cloud compute, storage, and network expenses.

- Implementation costs: Professional services, consulting, custom development.

- Training costs: User education, certification programs, ongoing support.

- Maintenance costs: System administration, upgrades, troubleshooting.

Evaluation approach:

Calculate total cost over 3-5 years including all categories above. Compare against expected benefits: faster decisions, reduced analyst workload, improved business outcomes. Don’t optimize for lowest licensing cost while ignoring implementation and maintenance expenses.
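The arithmetic can be sketched in a few lines. All figures below are invented purely for illustration, and the model is deliberately simple: it assumes flat annual costs and ignores discounting and price changes. The function name and cost categories are hypothetical groupings of the categories listed above.

```python
def total_cost_of_ownership(one_time: dict[str, int], annual: dict[str, int], years: int) -> int:
    """One-time costs plus recurring costs over the evaluation horizon."""
    if years <= 0:
        raise ValueError("years must be positive")
    return sum(one_time.values()) + years * sum(annual.values())


# Hypothetical platform A: higher licence fee, lighter implementation.
A_ONE_TIME = {"implementation": 150_000, "initial_training": 40_000}
A_ANNUAL = {"licensing": 120_000, "infrastructure": 60_000,
            "maintenance": 30_000, "ongoing_training": 10_000}

# Hypothetical platform B: half the licence fee, heavier everything else.
B_ONE_TIME = {"implementation": 400_000, "initial_training": 80_000}
B_ANNUAL = {"licensing": 60_000, "infrastructure": 90_000,
            "maintenance": 70_000, "ongoing_training": 20_000}

for years in (3, 5):
    cost_a = total_cost_of_ownership(A_ONE_TIME, A_ANNUAL, years)
    cost_b = total_cost_of_ownership(B_ONE_TIME, B_ANNUAL, years)
    print(f"{years} years: A = {cost_a:,}  B = {cost_b:,}")
```

In this made-up scenario, platform B charges half the licensing fee yet costs more over both horizons, which is the trap the evaluation approach warns against.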

Implementation Best Practices: Beyond Tool Selection

Technology alone does not guarantee democratization success—organizations must prepare data, train users, and establish governance alongside platform deployment.

Data Must Be Organized and Prepared

Democratization amplifies the impact of data quality issues—poor data accessible to more people creates more poor decisions.

Critical preparation steps:

- Data profiling and assessment to identify inconsistencies, missing values, duplicates, and anomalies.

- Systematic cleansing addressing formatting issues, standardizing naming conventions, and correcting errors.

- Integration and enrichment merging datasets from disparate sources into unified views.

- Validation and quality scoring establishing metrics indicating trustworthiness.

- Comprehensive documentation providing context about origins, update frequencies, and known limitations.
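As a rough illustration of the profiling and quality-scoring steps, the Python sketch below counts missing values and duplicate rows and derives a per-column completeness score. The `profile` function and the sample records are hypothetical, and it uses only the standard library; production profiling tools check far more, including types, ranges, and statistical anomalies.

```python
from collections import Counter


def profile(rows, columns):
    """Return per-column missing counts, duplicate row count, and completeness."""
    missing = {col: 0 for col in columns}
    for row in rows:
        for col in columns:
            value = row.get(col)
            # Treat None and blank strings as missing.
            if value is None or (isinstance(value, str) and not value.strip()):
                missing[col] += 1

    # A row is a duplicate if all profiled columns match an earlier row.
    row_counts = Counter(tuple(row.get(col) for col in columns) for row in rows)
    duplicates = sum(count - 1 for count in row_counts.values() if count > 1)

    total = len(rows)
    completeness = {
        col: (1 - missing[col] / total) if total else 0.0 for col in columns
    }
    return {
        "row_count": total,
        "missing": missing,
        "duplicate_rows": duplicates,
        "completeness": completeness,
    }


# Hypothetical sample records.
rows = [
    {"customer_id": 1, "region": "EMEA", "revenue": 1200.0},
    {"customer_id": 2, "region": "", "revenue": 950.0},
    {"customer_id": 2, "region": "", "revenue": 950.0},
    {"customer_id": 3, "region": "APAC", "revenue": None},
]

report = profile(rows, ["customer_id", "region", "revenue"])
print(report)
```

Here the report flags two missing `region` values, one missing `revenue`, and one duplicate row. Publishing a score like this alongside each dataset gives business users a simple signal of how far to trust it.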

Organizations implementing robust data preparation report 40% faster insight discovery and significantly improved decision quality. Modern platforms increasingly incorporate AI-powered profiling and automated anomaly detection.

Users Must Be Trained in Data Literacy

Even with intuitive tools and high-quality data, users need skills to interpret information correctly, understand analytical limitations, and derive valid conclusions.

Effective training programs include:

- Role-based learning paths tailored to skill levels (casual users, power users, analysts).

- Hands-on exercises using real organizational data and business scenarios.

- Ongoing support through office hours, communities of practice, and advanced workshops.

- Certification programs recognizing skill development.

- Just-in-time resources like embedded help, video tutorials, and contextual tips within tools.

Organizations tracking literacy improvements alongside tool adoption report higher utilization rates, greater confidence in insights, and measurable business impact.

Governance Policies Must Be Established

Implementing tools without clear governance creates chaos rather than democratization.

Essential governance elements:

- Data classification schemes categorizing sensitivity levels.

- Clear access policies defining who can view, modify, or share different data types.

- Data stewardship roles assigning accountability for quality and compliance.

- Usage guidelines documenting appropriate analytical practices.

- Compliance frameworks ensuring GDPR, HIPAA, CCPA adherence.
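A classification scheme becomes enforceable once it is expressed as data that a platform can apply automatically. The Python sketch below assumes a hypothetical four-level scheme and a hypothetical mapping from roles to the highest level they may see; the column names, roles, and `mask_row` function are illustrative only.

```python
# Hypothetical classification levels, ordered from least to most sensitive.
LEVELS = ["public", "internal", "confidential", "restricted"]

# Hypothetical classification of columns in an orders dataset.
COLUMN_CLASSIFICATION = {
    "order_id": "public",
    "region": "internal",
    "email": "confidential",
    "ssn": "restricted",
}

# Hypothetical access policy: the highest level each role may view.
ROLE_MAX_LEVEL = {
    "viewer": "internal",
    "analyst": "confidential",
    "steward": "restricted",
}


def mask_row(row, role):
    """Redact every column classified above the role's maximum level."""
    max_rank = LEVELS.index(ROLE_MAX_LEVEL[role])
    masked = {}
    for col, value in row.items():
        # Unclassified columns default to the most restrictive level.
        level = COLUMN_CLASSIFICATION.get(col, "restricted")
        masked[col] = value if LEVELS.index(level) <= max_rank else "***"
    return masked


row = {"order_id": 17, "region": "EMEA", "email": "a@example.com", "ssn": "000-00-0000"}

print(mask_row(row, "viewer"))
print(mask_row(row, "analyst"))
```

A viewer sees only `order_id` and `region`, while an analyst also sees `email`. Defaulting unclassified columns to the most restrictive level is a deliberate choice in this sketch: new data stays hidden until a steward classifies it.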

Governance should enable rather than constrain: automate policy enforcement so users keep their autonomy, and balance protection against usability.

Phased Rollout and Continuous Iteration

Rather than attempting organization-wide deployment immediately, successful implementations follow staged approaches:

- Pilot with limited scope: Deploy to small group with well-defined use cases

- Gather feedback and refine: Iterate based on real usage patterns

- Expand gradually: Scale to additional departments as processes mature

- Measure and optimize continuously: Track adoption, impact, and satisfaction

This phased approach de-risks implementation, enables learning from early deployments, and builds organizational confidence before full-scale rollout.

The Integrated Platform Approach

While each tool category serves distinct functions, the most effective democratization strategies integrate platforms into cohesive ecosystems.

Example Integrated Stack:

- Airbyte or Rivery ingests data from operational systems into Snowflake or Databricks.

- Promethium federates access across these and other distributed sources without data movement, providing natural language query capabilities.

- AtScale or Promethium’s 360° Context Hub establishes semantic relationships with unified business definitions.

- Alation or Collibra catalogs available datasets with rich metadata.

- Tableau, Power BI, or Domo connects to these governed semantic views, enabling business users to create visualizations.

This integration ensures that data flows reliably from sources to destinations, governance policies are enforced consistently, semantic definitions remain unified, and users interact through interfaces matched to their skill levels.

Conclusion: Technology as Enabler, Not Solution

Data democratization platforms provide essential infrastructure for self-service analytics—but tools alone do not guarantee success. Organizations must combine appropriate technology with data preparation, user training, robust governance, and cultural transformation that values data-driven decision-making.

When evaluating platforms, prioritize ease of use for target users, seamless integration with existing infrastructure, scalability to grow with organizational needs, comprehensive governance capabilities, and transparent total cost of ownership. Run pilot implementations with clear success metrics, iterate based on user feedback, and scale gradually as processes mature.

The platforms that succeed in enabling democratization—whether comprehensive solutions like Promethium’s AI Insights Fabric, specialized semantic layers like AtScale, mature virtualization like Denodo, or integrated BI platforms like Domo and Power BI—are those that balance powerful capabilities with accessibility, technical sophistication with business usability, and broad data access with appropriate governance.

Select tools that align with your architecture (centralized versus distributed), your users (technical versus business), and your timeline (weeks versus months). Most importantly, remember that technology enables democratization—but people, process, and culture determine whether it succeeds.
